Power Trading Price Forecasting using an LSTM-GRU Ensemble and an XGBoost Residual Correction Model

Abstract

Accurate power price forecasting is vital for market stability, yet conventional models often suffer from a time-lag phenomenon caused by high volatility. To address this, we propose a hybrid model integrating an LSTM-GRU ensemble with an XGBoost-based residual correction mechanism. This two-stage framework first predicts the main trend and then refines the forecast by modeling the residual errors. Experimental results demonstrate its superiority, achieving an RMSE of 10.9843 and an R² score of 0.9282. Notably, the proposed model outperformed not only traditional baselines but also advanced deep learning architectures, including the Transformer (RMSE 12.67) and other hybrid variants (RMSE 12.43). Compared to a single LSTM, the proposed model reduces the RMSE by 5.21%. These findings indicate that an explicit residual correction strategy is more effective for enhancing accuracy than merely increasing architectural complexity.

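Abstract (translated from Korean)

Accurate forecasting of power prices is essential for market stabilization, but existing models frequently show limitations caused by the time-lag phenomenon that arises from high volatility. To address this, this paper proposes a hybrid model that combines an LSTM-GRU ensemble with an XGBoost-based residual correction algorithm. The model first predicts the main trend and then corrects the final forecast by modeling the residual error. In the experiments, the proposed model recorded an RMSE of 10.9843 and an R² of 0.9282, demonstrating its superiority. This is a marked improvement over not only traditional time series models but also the Transformer (RMSE 12.67) and another composite hybrid model (RMSE 12.43), and it reduces the prediction error by about 5.21% compared with a single LSTM model. The result shows that the residual correction model is more efficient than existing approaches that pursue better forecasting performance simply by increasing the complexity of the neural network structure.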

Keywords:

power price, hybrid time series models, deep learning, LSTM, GRU, XGBoost

Ⅰ. Introduction

Accurate forecasting of the System Marginal Price (SMP) serves as a cornerstone for efficient power market operations and grid stability. However, the critical challenge in this domain stems from the intrinsic non-linearity and non-stationarity of the price signals themselves. Unlike typical financial time-series, SMP data exhibits abrupt structural changes, high-frequency volatility, and heavy-tailed distributions driven by dynamic bidding strategies and the intermittency of renewable energy. Consequently, traditional modeling approaches that rely on stationarity assumptions are often insufficient to adapt to these stochastic behaviors. Therefore, there is an imperative need to move beyond simple trend estimation and develop robust architectures capable of capturing the complex, time-varying dynamics inherent in power market data[1][2].

Conventional statistical models, such as the Autoregressive Integrated Moving Average (ARIMA) model, have long served as the standard for time series forecasting. However, due to their linear assumptions, these models are often inadequate for capturing the high volatility and complex non-linear dynamics of power trading prices. Deep learning architectures, notably Long Short-Term Memory (LSTM), have shown significant improvements in handling temporal dependencies[3]. Nevertheless, LSTM models remain susceptible to the time-lag phenomenon, in which predictions trail the actual series because the model largely reproduces the previous time step's value. Consequently, these models often fail to promptly anticipate sudden spikes or structural changes in the market.

To overcome these limitations, this study proposes a novel hybrid forecasting model that integrates an LSTM-GRU ensemble with an XGBoost-based residual correction strategy. Unlike recent approaches that rely on increasing architectural complexity such as Transformers or deep feature fusion models to improve prediction accuracy, our method focuses on explicitly modeling the non-linear residual errors that deep learning models fail to capture. Through comprehensive experiments, we demonstrate that the proposed model significantly outperforms not only traditional time series models but also complex deep learning architectures, offering a more efficient and accurate solution for forecasting high-volatility and non-stationary time series.

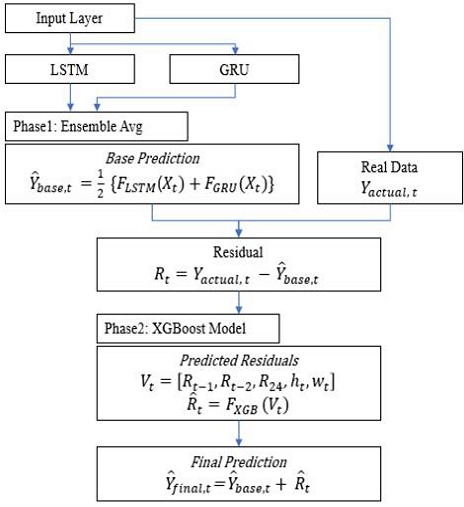

The proposed model operates in a sequential two-stage process. In the first stage, a parallel ensemble of LSTM and Gated Recurrent Unit (GRU) networks predicts the global trend of the power trading price. A key feature of this architecture is the parallel composition based on SMP temporal characteristics, where the outputs are integrated via simple averaging. This averaging mechanism serves to reduce model variance and ensure stable trend detection. In the second stage, an XGBoost regressor captures the non-linear error patterns (residuals) left by the base ensemble to refine the final output. This residual correction approach enables the model to compensate for the prediction delays inherent in the deep learning base models, thereby enhancing the overall prediction performance.

The remainder of this paper is organized as follows: Section 2 reviews related works on deep learning time series models. Section 3 details the proposed hybrid architecture combining LSTM-GRU and XGBoost. Section 4 presents the experimental results, and Section 5 concludes the paper.

Ⅱ. Related Works

2.1 Deep learning for time series forecasting

Recurrent Neural Networks (RNNs) have been fundamental in processing sequential data. However, standard RNNs suffer from the vanishing gradient problem, which hinders their ability to learn long-term dependencies in time series data. To address this, LSTM networks were introduced. LSTM incorporates memory cells and gating mechanisms (input, output, and forget gates) to effectively regulate the flow of information, making it highly suitable for capturing the long-term trends in power trading prices[4].

GRU is a variant of LSTM that simplifies the gating structure by combining the forget and input gates into a single update gate[5]. GRU offers similar performance to LSTM but is computationally more efficient and often converges faster, especially on smaller datasets or when capturing short-term fluctuations. In the domain of time series forecasting, both LSTM and GRU have demonstrated superior performance over traditional statistical models[6].

More recently, the Transformer architecture has emerged as a powerful alternative. Utilizing a self-attention mechanism, Transformers can capture global temporal dependencies and allow for parallel processing, overcoming the sequential bottleneck of RNNs[7]. However, despite their success in various fields, applying Transformers to financial time-series remains challenging. Studies indicate that without sufficient data volume, the complex attention mechanisms may lead to overfitting or fail to capture the local volatility inherent in time series as effectively as the sequential inductive bias of RNNs[8].

2.2 Hybrid and residual correction model

Despite the success of deep learning, single models often struggle to capture both the global trend and local volatility simultaneously. To address this limitation, recent studies have focused on hybrid models that combine different algorithms to leverage their respective strengths[9].

N. Aslam et al.[10] proposed an ensemble LSTM-GRU model for cryptocurrency sentiment analysis, employing a stacked architecture to capture complex sequential patterns. The stacked output is fed into a dense layer to generate the final prediction. However, this structure requires a substantial number of parameters, leading to high computational complexity. A. S. Girsang et al.[11] proposed a cryptocurrency price prediction model that utilizes a parallel LSTM-GRU architecture similar to our base learner. However, a key structural distinction lies in the aggregation method: while they employed dense layers to combine the branch outputs, our approach adopts simple averaging followed by a residual correction strategy.

Hybrid architectures that include a residual modeling step have been used to reduce prediction error in non-stationary time series tasks. The theoretical foundation of this approach was established by G. P. Zhang[12], who demonstrated that a single model often fails to capture mixed linear and non-linear patterns simultaneously. He proposed a hybrid model in which a traditional linear model (e.g., ARIMA) first captures the main trend, and a neural network is subsequently used to model the remaining residuals. This decompose-and-ensemble strategy showed that the combined model could outperform either individual component in isolation.
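The decomposition behind this strategy can be written compactly. The following is a sketch in generic notation; the symbols $L_t$, $N_t$, $e_t$, and $f$ are chosen here for illustration and do not appear elsewhere in this paper.

```latex
\begin{aligned}
y_t &= L_t + N_t, \\
e_t &= y_t - \hat{L}_t, \\
\hat{N}_t &= f(e_{t-1}, e_{t-2}, \dots, e_{t-n}), \\
\hat{y}_t &= \hat{L}_t + \hat{N}_t .
\end{aligned}
```

Here $L_t$ is the linear component estimated by the first-stage model, $N_t$ is the non-linear component, $e_t$ is the first-stage residual, and $f$ is the second-stage learner fitted to lagged residuals.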

Building on this theoretical basis, H. Liu et al.[13] applied a hybrid ARIMA-ANN approach to wind speed forecasting. Their results verified that residual learning is crucial for handling the stochastic nature of renewable energy data, significantly reducing the systematic bias left by the primary predictor.

Ⅲ. Proposed Method

3.1 Data description and analysis

The data for this study comprise hourly SMP records from 2011 to 2026, obtained from the Korea Electric Power Statistics Information System[14].

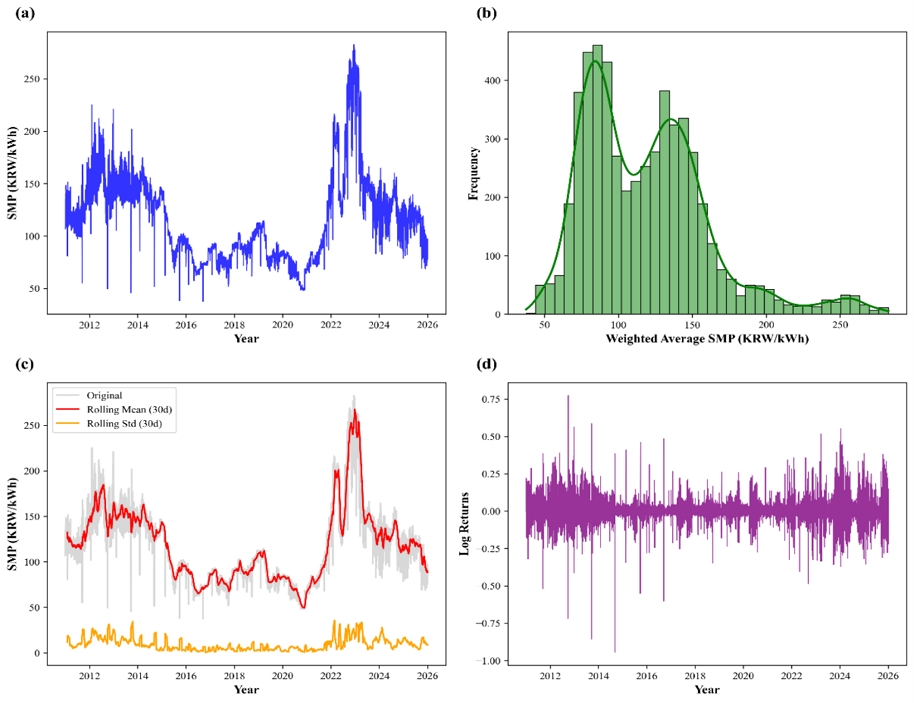

Visual inspection of Figure 1 reveals that the time series is highly volatile and non-stationary, marked by abrupt spikes and precipitous drops that reflect fluctuating power supply and demand. The data also display distinct seasonality, featuring diurnal cycles (daytime peaks vs. nighttime troughs) and weekly variations. The Augmented Dickey-Fuller (ADF) test does not reject the unit-root null hypothesis, which statistically corroborates the data's non-stationarity.

Figure 1. Hourly SMP data from 2011 to 2026: (a) daily weighted average price, (b) weighted average SMP, (c) mean and standard deviation, (d) volatility clustering check

Table 1 summarizes the descriptive statistics of the SMP data set. The skewness of 1.08 indicates a positively skewed distribution, implying frequent upward price spikes. The kurtosis of 1.51 suggests a fat-tailed distribution, confirming the presence of extreme volatility and outliers. Furthermore, the ADF test results indicate that the data are non-stationary.
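The two shape statistics above can be computed with a few lines of code. The following is a minimal sketch using population moments. The text does not say which kurtosis convention Table 1 uses, so the sketch assumes the excess convention (a normal distribution scores 0), under which a positive value means heavier-than-Gaussian tails. The function names and the toy prices are illustrative only.

```python
def skewness(xs):
    """Population skewness: the third standardized moment."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5


def excess_kurtosis(xs):
    """Population excess kurtosis: the fourth standardized moment minus 3."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3.0


# Toy prices with one upward spike (placeholder values, not SMP data).
toy_prices = [80.0, 82.0, 81.0, 79.0, 83.0, 80.0, 250.0]
toy_skew = skewness(toy_prices)          # positive: the spike stretches the right tail
toy_kurt = excess_kurtosis(toy_prices)
```

A single large upward spike is enough to make the skewness positive, which matches the interpretation given for the SMP series.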

These characteristics (high volatility, non-linearity, and non-stationarity) suggest that traditional linear models are insufficient.

3.2 Overall modeling works

The proposed hybrid model comprises two distinct modules: a base learner and a residual learner. The base learner utilizes an ensemble of LSTM and GRU models to capture the long-term global trend of the power trading price. The residual learner employs the XGBoost algorithm to model the residuals left by the base learner, thereby correcting the final prediction. Mathematically, the final prediction $\hat{y}_t$ at time $t$ is defined as the sum of the base prediction $\hat{y}^{base}_t$ and the estimated residual $\hat{r}_t$:

| $\hat{y}_t = \hat{y}^{base}_t + \hat{r}_t$ | (1) |

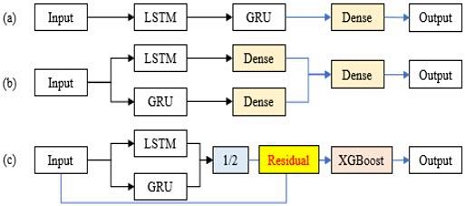

Figure 2 illustrates the structural differences among hybrid forecasting models based on three common strategies for combining RNN models. Figure 2(a)[10] represents a stacked (sequential) architecture in which errors from the primary LSTM layer can propagate to the subsequent GRU layer. Figure 2(b)[11] shows a weighted parallel structure using dense layers for aggregation; while flexible, this approach increases computational complexity and the risk of overfitting due to additional trainable parameters.

Figure 2. Schematic comparison of conventional LSTM-GRU hybrids (a), (b) and the proposed hybrid architecture (c)

In contrast, Figure 2(c) depicts the proposed hybrid model adopted in this study. The model first employs a parallel LSTM-GRU architecture with a simple averaging mechanism to establish a stable baseline prediction (Base Learner). Subsequently, the XGBoost component is integrated to refine this baseline by modeling the residuals (Residual Correction). This two-stage design combines the generalization power of the ensemble with the non-linear error correction capability of XGBoost, ensuring both stability and accuracy.

3.3 Base learner: LSTM+GRU ensemble

To enhance the generalization performance and stability of the trend prediction, we adopt an ensemble approach. Two independent RNNs are trained: one based on the LSTM architecture, $F_{LSTM}$, and the other on the GRU architecture, $F_{GRU}$. Both models are trained on the same pre-processed input sequences $X_t$ with a lag of 48 hours. Specifically, the LSTM excels at capturing long-term dependencies through its memory cells, whereas the GRU offers computational efficiency and rapid adaptation to short-term fluctuations due to its simplified gating structure.

Since SMP data exhibit a strong daily (24-hour) periodicity, a 48-hour window allows the model to capture the temporal dynamics of the two full preceding days. To validate this choice, we conducted a sensitivity analysis by varying the sequence length from 24 to 168 hours. The model achieved the lowest RMSE at a sequence length of 48 hours. Shorter sequences failed to capture sufficient historical context, while longer sequences (more than 48 hours) introduced noise and increased computational complexity.
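The windowing step can be sketched as follows. This is a minimal illustration that assumes a one-step-ahead target, since the forecast horizon is not stated explicitly in the text. The function name `make_windows` and the placeholder series are hypothetical.

```python
def make_windows(series, lag=48):
    """Return (X, y): each X[i] holds `lag` consecutive values and y[i] is the value that follows."""
    X, y = [], []
    for end in range(lag, len(series)):
        X.append(series[end - lag:end])  # the `lag` hours preceding the target
        y.append(series[end])            # assumed one-step-ahead target
    return X, y


# Placeholder hourly series (not SMP values).
prices = [float(i) for i in range(60)]
X, y = make_windows(prices, lag=48)
```

Repeating the call with `lag` set to other values between 24 and 168 reproduces the kind of sequence-length sweep described above.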

The final base prediction $\hat{y}^{base}_t$ is obtained by averaging the outputs of the LSTM and GRU models. This ensemble strategy reduces the variance that single models often exhibit and is expressed as:

| $\hat{y}^{base}_t = \frac{1}{2}\left(F_{LSTM}(X_t) + F_{GRU}(X_t)\right)$ | (2) |
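A short variance calculation suggests why simple averaging tends to stabilize the base prediction. As a sketch, assume that the prediction errors of the two models, written here as $\varepsilon_{LSTM}$ and $\varepsilon_{GRU}$ (symbols introduced only for this illustration), have equal variance $\sigma^2$ and correlation $\rho$:

```latex
\operatorname{Var}\!\left(\frac{\varepsilon_{LSTM} + \varepsilon_{GRU}}{2}\right)
= \frac{\sigma^2 + \sigma^2 + 2\rho\sigma^2}{4}
= \frac{1+\rho}{2}\,\sigma^2 \;\le\; \sigma^2 .
```

Under these assumptions the variance of the averaged error never exceeds that of a single model, and the gain grows as the errors of the two models become less correlated.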

3.4 Residual learner: XGBoost

Despite the ensemble approach, deep learning models may still exhibit time-lagging behavior in highly volatile sections. To address this, we define the residual $r_t$ as the difference between the actual value $y_t$ and the base prediction $\hat{y}^{base}_t$ of the LSTM-GRU ensemble, as expressed in Eq. (3):

| $r_t = y_t - \hat{y}^{base}_t$ | (3) |

Further, we construct a residual feature vector $V_t$ from the residuals, containing sparse lags (e.g., 1, 2, and 24 hours ago) and two temporal features, the hour $h_t$ and the weekday $w_t$, where $h_t \in \{0, 1, \cdots, 23\}$ denotes the hour of the day (0 corresponds to 24:00) and $w_t \in \{0, 1, \cdots, 6\}$ represents the day of the week (0 corresponds to Monday).
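The construction of the feature vector can be sketched as follows. This is an illustration under stated assumptions: the lag set (1, 2, 24) follows the example in the text, the helper name `residual_features` is hypothetical, and the residuals and timestamps are placeholders.

```python
from datetime import datetime, timedelta


def residual_features(residuals, timestamps, lags=(1, 2, 24)):
    """Build one feature row per time step that has every requested lag available.

    Each row is [r_{t-1}, r_{t-2}, r_{t-24}, hour, weekday]; the target is r_t.
    """
    max_lag = max(lags)
    rows, targets = [], []
    for t in range(max_lag, len(residuals)):
        lagged = [residuals[t - k] for k in lags]
        ts = timestamps[t]
        # datetime.hour is in 0..23 and datetime.weekday() returns 0 for Monday.
        rows.append(lagged + [ts.hour, ts.weekday()])
        targets.append(residuals[t])
    return rows, targets


start = datetime(2024, 1, 1)  # placeholder start date (a Monday)
timestamps = [start + timedelta(hours=i) for i in range(30)]
residuals = [float(i) for i in range(30)]  # placeholder residuals
V, r = residual_features(residuals, timestamps)
```

The first usable row corresponds to the 25th hour, because the 24-hour lag needs a full day of history.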

We employed a sparse lag strategy instead of using full sequences. Analyzing the auto-correlation of the residuals revealed that errors are highly correlated with specific time steps: the immediate past (t - 1, t - 2) and the same hour of the previous day (t - 24, t - 48). Therefore, we constructed the input features for XGBoost using these specific high-correlation lags to maximize correction efficiency while minimizing dimensionality.
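A lag screening of this kind can be sketched with a plain sample autocorrelation. The function below is a generic estimator, not the authors' code, and the sine wave is a synthetic stand-in for residuals with a 24-hour cycle.

```python
import math


def autocorr(xs, lag):
    """Sample autocorrelation of xs at a positive lag."""
    n = len(xs)
    mean = sum(xs) / n
    denom = sum((x - mean) ** 2 for x in xs)
    num = sum((xs[t] - mean) * (xs[t - lag] - mean) for t in range(lag, n))
    return num / denom


# Synthetic residuals with a pure 24-hour cycle (placeholder, not real residuals).
wave = [math.sin(2 * math.pi * t / 24) for t in range(240)]
acf = {lag: autocorr(wave, lag) for lag in (1, 2, 12, 24, 48)}
```

For this synthetic series the autocorrelation is strongly positive at lags 1, 2, 24, and 48 and negative at lag 12, which is the kind of pattern that would motivate keeping only a few high-correlation lags as features.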

An XGBoost regressor $F_{XGB}$ is then trained on this residual data set to predict the correction term $\hat{r}_t$, as shown in Eq. (4).

| $\hat{r}_t = F_{XGB}(V_t)$ | (4) |

Finally, the residual predicted by XGBoost is added to the base prediction to produce the corrected final output, as shown in Eq. (1).
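The way Eqs. (1) to (3) fit together can be sketched end to end with stand-in components. In this illustration the two base models are toy functions rather than trained networks, and the residual learner is a simple per-hour mean corrector that is used here in place of XGBoost only to keep the example dependency-free. All names are hypothetical.

```python
from collections import defaultdict


def base_prediction(f_lstm, f_gru, x):
    """Eq. (2): simple average of the two base model outputs."""
    return 0.5 * (f_lstm(x) + f_gru(x))


class HourlyMeanCorrector:
    """Stand-in residual learner (not XGBoost): mean training residual per hour of day."""

    def fit(self, hours, residuals):
        sums, counts = defaultdict(float), defaultdict(int)
        for h, r in zip(hours, residuals):
            sums[h] += r
            counts[h] += 1
        self.mean_ = {h: sums[h] / counts[h] for h in sums}
        return self

    def predict(self, hour):
        return self.mean_.get(hour, 0.0)  # no correction for unseen hours


def toy_lstm(x):   # toy stand-in for a trained LSTM
    return x[-1]


def toy_gru(x):    # toy stand-in for a trained GRU
    return sum(x) / len(x)


windows = [[10.0, 12.0], [12.0, 14.0], [14.0, 16.0]]
actual = [15.0, 16.0, 19.0]
hours = [8, 9, 8]

base = [base_prediction(toy_lstm, toy_gru, w) for w in windows]
resid = [y - b for y, b in zip(actual, base)]            # Eq. (3)
corrector = HourlyMeanCorrector().fit(hours, resid)
corrected = base[0] + corrector.predict(hours[0])        # Eq. (1)
```

In a real setting the corrector would be fitted on residuals from the training period only and then applied to unseen data; the toy example reuses the same points purely to show the data flow.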

The complete process of the proposed model is shown in Figure 3.

Ⅳ. Experiments and Results

4.1 Data set and experimental setup

To validate the performance of the proposed model, we utilized hourly power trading price data. The dataset was pre-processed using Min-Max normalization to scale the values between 0 and 1, facilitating stable training of the deep learning models. The data were split into a training set (80%) and a test set (20%). The input sequence length (lag) for the base learner was set to 48 hours. For the residual learner, we constructed feature vectors using sparse lags of the residual (1, 2, and 24 hours ago) along with temporal information such as hour and weekday. The experiments were conducted in a Python environment using the TensorFlow and XGBoost libraries.
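The pre-processing can be sketched as below. Two points are assumptions rather than statements from the text: the split is taken to be chronological (earliest 80% for training), and the scaler is fitted on the training portion only so that no test information leaks into training. The class and function names are hypothetical.

```python
def chronological_split(series, train_ratio=0.8):
    """Split a time-ordered series into an earlier training part and a later test part."""
    cut = int(len(series) * train_ratio)
    return series[:cut], series[cut:]


class MinMaxScaler1D:
    """Min-Max scaling to [0, 1] based on the range seen in `fit`."""

    def fit(self, xs):
        self.lo, self.hi = min(xs), max(xs)
        return self

    def transform(self, xs):
        span = (self.hi - self.lo) or 1.0  # guard against a constant series
        return [(x - self.lo) / span for x in xs]

    def inverse_transform(self, zs):
        span = (self.hi - self.lo) or 1.0
        return [z * span + self.lo for z in zs]


# Placeholder prices (not SMP values).
prices = [50.0, 100.0, 150.0, 200.0, 250.0, 120.0, 80.0, 160.0, 300.0, 90.0]
train, test = chronological_split(prices)
scaler = MinMaxScaler1D().fit(train)
train_scaled = scaler.transform(train)
test_scaled = scaler.transform(test)
```

With a train-only fit, test values outside the training range map outside [0, 1], as the 300.0 entry does here. Predictions are mapped back with `inverse_transform` so that errors can be reported in the original price unit.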

We detail the network architectures and hyperparameter optimization processes for both the base deep learning models and the residual learner. All hyperparameters were determined using a grid search method with 5-fold cross-validation to minimize the Root Mean Squared Error (RMSE) on the validation set. The detailed configuration is summarized in Table 2.

LSTM and GRU networks were implemented using the TensorFlow-Keras framework. For the XGBoost regressor, we optimized key hyperparameters including the number of estimators, maximum tree depth, and learning rate. The grid search revealed that a lower learning rate combined with a higher number of estimators provided the best generalization for residual correction. Specifically, we set the number of estimators to 1000, the maximum depth to 6 to capture non-linear patterns without overfitting, and the learning rate to 0.05.
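The reported XGBoost settings map onto the scikit-learn style interface of the library roughly as follows. This is a configuration sketch only: the three values come from the text, every other setting is left at the library default, and the helper name `build_residual_learner` is hypothetical.

```python
# Hyperparameters reported in the text for the residual learner.
XGB_PARAMS = {
    "n_estimators": 1000,
    "max_depth": 6,
    "learning_rate": 0.05,
}


def build_residual_learner(params=None):
    """Create an untrained XGBoost regressor; requires the `xgboost` package."""
    # Imported lazily so the configuration can be inspected without xgboost installed.
    from xgboost import XGBRegressor

    return XGBRegressor(**(params or XGB_PARAMS))
```

A typical use would be `build_residual_learner().fit(V_train, r_train)`, where `V_train` and `r_train` stand for the residual feature rows and residual targets from the training period.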

To comprehensively evaluate the predictive performance and stability of the proposed model, we employed three standard metrics: RMSE, Mean Absolute Error (MAE), and the coefficient of determination (R²). RMSE was selected as the primary metric because it penalizes larger errors more heavily than smaller ones; this property is particularly crucial in power trading, where accurately predicting extreme price spikes is essential for risk management. MAE was used to provide a straightforward measure of the average magnitude of errors, offering an intuitive interpretation of the expected price deviation in the actual currency unit. Finally, R² was utilized to assess the proportion of variance in the target variable that is explained by the model, indicating the overall goodness of fit relative to a baseline that always predicts the mean.
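The three metrics can be written out directly from their standard definitions. The following is a minimal sketch; the sample values are placeholders chosen so that one large error dominates the RMSE.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((a - b) ** 2 for a, b in zip(y_true, y_pred))
    ss_tot = sum((a - mean) ** 2 for a in y_true)
    return 1.0 - ss_res / ss_tot


# Placeholder values: the last point mimics a missed price spike.
y_true = [100.0, 120.0, 90.0, 200.0]
y_pred = [110.0, 115.0, 95.0, 170.0]
scores = (rmse(y_true, y_pred), mae(y_true, y_pred), r2_score(y_true, y_pred))
```

In this toy case the MAE is 12.5 while the RMSE is about 16.2, because the single 30-unit miss is squared before averaging. This is the spike sensitivity that motivates RMSE as the primary metric.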

4.2 Experimental results and analysis

Table 3 summarizes the quantitative performance comparison of the forecasting models using the RMSE, MAE, and R² metrics. The experimental results demonstrate that the proposed hybrid residual learning model achieved the highest accuracy across all evaluation metrics. Detailed observations from the experiment are as follows.

First, the traditional statistical model, ARIMA(12, 1, 0), exhibited the poorest performance. This indicates that linear statistical methods fail to capture the high volatility and non-linear dynamics inherent in the power trading price data. Support Vector Regression (SVR) showed a significant improvement over ARIMA, yet it still under-performed compared to deep learning-based approaches.

Second, regarding deep learning architectures, we extended our comparison to include complex models reflecting recent research trends. The Transformer (Self-Attention) model achieved an RMSE of 12.6705 and an R² of 0.9052. Additionally, we implemented Sequential Stacked LSTM-GRU and Parallel Deep Fusion models, based on the architectural concepts proposed in [10] and [11]. These models, which utilize dense layers for complex feature integration, recorded RMSE values of 12.4615 and 12.4300, respectively.

While these models outperformed the SVR, they notably under-performed compared to the simpler RNN-based models (RMSE ~11.5). This suggests that for the high-volatility nature of SMP data, excessive architectural complexity inherent in both Transformer and the hybrid models proposed in the reference papers may lead to overfitting or optimization difficulties, making them less effective than the simple sequential inductive bias of standard LSTMs.

Third, the proposed hybrid model ([LSTM-GRU] + XGBoost) outperformed all baseline models. Notably, compared to the single LSTM baseline, the proposed model reduced the RMSE by approximately 5.21% (from 11.5877 to 10.9843). This result empirically supports the claim that the residual correction strategy using XGBoost reduces prediction errors that both single deep learning models and complex fusion architectures struggle to capture.
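The quoted reduction follows from a one-line calculation on the reported RMSE values. The sketch below also applies the same formula to the LSTM-GRU ensemble RMSE of 11.4030 reported in this paper; the function name is hypothetical.

```python
def relative_reduction(baseline, improved):
    """Percentage reduction of `improved` relative to `baseline`."""
    return (baseline - improved) / baseline * 100.0


vs_lstm = relative_reduction(11.5877, 10.9843)      # single LSTM -> proposed model
vs_ensemble = relative_reduction(11.4030, 10.9843)  # LSTM-GRU ensemble -> proposed model
print(f"{vs_lstm:.2f}% vs. single LSTM, {vs_ensemble:.2f}% vs. LSTM-GRU ensemble")
```

The comparison against the ensemble isolates the part of the improvement that comes from the XGBoost correction stage alone.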

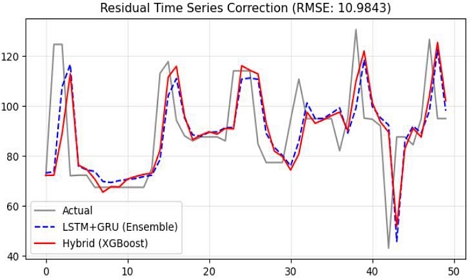

As illustrated in Figure 4, the qualitative comparison highlights the effectiveness of the residual correction strategy. While the LSTM-GRU ensemble (blue dashed line) captures the general trend, it fails to react promptly to sudden price spikes, resulting in a noticeable time lag. In contrast, the proposed hybrid model (red solid line) corrects these deviations more effectively by integrating the residuals predicted by XGBoost, thereby achieving a tighter alignment with the actual SMP (gray solid line).
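A time lag of this kind can also be checked numerically. The following is a hypothetical diagnostic, not part of the experiments reported here: it looks for the shift at which a forecast best matches the actual series, so that a result of 1 indicates a forecast that mostly repeats the previous hour.

```python
def best_shift(actual, predicted, max_shift=6):
    """Return the shift s in [0, max_shift] minimizing the MSE between predicted[t] and actual[t - s]."""
    best_s, best_mse = 0, float("inf")
    for s in range(max_shift + 1):
        # Use the same time steps for every shift so the errors are comparable.
        sq = [(predicted[t] - actual[t - s]) ** 2 for t in range(max_shift, len(actual))]
        mse = sum(sq) / len(sq)
        if mse < best_mse:
            best_s, best_mse = s, mse
    return best_s


# Placeholder series with two spikes (not SMP values).
actual = [80.0, 82.0, 85.0, 140.0, 90.0, 84.0, 83.0, 120.0, 88.0, 86.0]
persistence = [actual[0]] + actual[:-1]   # forecast that repeats the previous value
lag_of_persistence = best_shift(actual, persistence, max_shift=3)
lag_of_perfect = best_shift(actual, actual, max_shift=3)
```

A forecast free of time lag yields a shift of 0, whereas the persistence forecast yields a shift of 1.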

Ⅴ. Conclusion

In this paper, we proposed a hybrid forecasting model combining an LSTM-GRU ensemble and an XGBoost-based correction strategy to enhance the prediction accuracy of power trading prices. By leveraging the ensemble of deep learning models for global trend detection and XGBoost for error correction, the proposed model effectively addressed the high volatility and non-stationarity inherent in power market data.

Experimental results confirmed the superiority of the proposed hybrid model across multiple evaluation metrics. The proposed model achieved an RMSE of 10.9843, an MAE of 6.5794, and an R² score of 0.9282, outperforming all other models evaluated in this work.

The proposed model demonstrated superior accuracy compared to advanced deep learning architectures. It outperformed the Transformer-based self-attention model (RMSE 12.6705) as well as complex hybrid structures, including the Sequential Stacked LSTM-GRU (RMSE 12.4615) and the Parallel Deep Fusion model (RMSE 12.4300). Furthermore, it surpassed standard RNN-based approaches, including the single LSTM (RMSE 11.5877) and the LSTM-GRU ensemble (RMSE 11.4030).

The high R² score of 0.9282 indicates that the model explains approximately 93% of the variance in price fluctuations. This result provides empirical evidence that the proposed residual correction model is a more effective solution for capturing complex non-linear patterns than merely increasing the depth or architectural complexity of neural networks.

In this study, our primary focus was to achieve high prediction accuracy by effectively modeling the non-linear and non-stationary characteristics inherent in SMP data. By employing the hybrid residual learning strategy, we captured complex volatility patterns and established a robust baseline using univariate time series data. Building upon this foundation, our future work will extend the proposed model to a multivariate setting. We plan to incorporate key exogenous variables, such as power plant outages and weather conditions, to further refine the model's adaptability to external market shocks.

Acknowledgments

This research was supported (in part) by the Glocal University Project of Mokpo National University in 2025.

References

[1] R. Weron, "Electricity price forecasting: A review of the state-of-the-art with a look into the future", Int. J. of Forecasting, Vol. 30, No. 4, pp. 1030-1081, Oct. 2014. https://doi.org/10.1016/j.ijforecast.2014.08.008

[2] M. Kizildag, F. Abut, and M. F. Akay, "Development of New Electricity System Marginal Price Forecasting Models Using Statistical and Artificial Intelligence Methods", Applied Sciences, Vol. 14, No. 21, pp. 1-24, Nov. 2024. https://doi.org/10.3390/app142110011

[3] G. Memarzadeh and F. Keynia, "Short-term electricity load and price forecasting by a new optimal LSTM-NN based prediction algorithm", Electric Power Systems Research, Vol. 192, pp. 1-14, Mar. 2021. https://doi.org/10.1016/j.epsr.2020.106995

[4] K. Greff, R. K. Srivastava, J. Koutnik, B. R. Steunebrink, and J. Schmidhuber, "LSTM: A Search Space Odyssey", IEEE Trans. on Neural Networks and Learning Systems, Vol. 28, No. 10, pp. 2222-2232, Oct. 2017. https://doi.org/10.1109/TNNLS.2016.2582924

[5] S. Y. Jhin, S. Kim, and N. Park, "Addressing Prediction Delays in Time Series Forecasting: A Continuous GRU Approach with Derivative Regularization", arXiv:2407.01622, pp. 1234-1245, Jun. 2024. https://doi.org/10.1145/3637528.3671969

[6] U. M. Sirisha, M. C. Belavagi, and G. Attigeri, "Profit Prediction Using ARIMA, SARIMA and LSTM Models in Time Series Forecasting: A Comparison", IEEE Access, Vol. 10, pp. 124715-124727, Nov. 2022. https://doi.org/10.1109/ACCESS.2022.3224938

[7] Q. Wen, T. Zhou, C. Zhang, W. Chen, Z. Ma, J. Yan, and L. Sun, "Transformers in Time Series: A Survey", arXiv:2202.07125, pp. 1-9, Feb. 2022. https://doi.org/10.48550/arXiv.2202.07125

[8] A. Zeng, M. Chen, L. Zhang, and Q. Xu, "Are Transformers Effective for Time Series Forecasting?", Proc. of the AAAI Conf. on Artificial Intelligence, Washington DC, USA, Vol. 37, No. 9, pp. 11121-11128, Feb. 2023. https://doi.org/10.1609/aaai.v37i9.26317

[9] W. Yang, S. Sun, Y. Hao, and S. Wang, "A novel machine learning-based electricity price forecasting model based on optimal model selection strategy", Energy, Vol. 238, Part C, pp. 1-14, Jan. 2022. https://doi.org/10.1016/j.energy.2021.121989

[10] N. Aslam, F. Rustam, E. Lee, P. B. Washington, and I. Ashraf, "Sentiment Analysis and Emotion Detection on Cryptocurrency Related Tweets Using Ensemble LSTM-GRU Model", IEEE Access, Vol. 10, pp. 39313-39324, Apr. 2022. https://doi.org/10.1109/ACCESS.2022.3165621

[11] A. S. Girsang and Stanley, "Hybrid LSTM and GRU for Cryptocurrency Price Forecasting Based on Social Network Sentiment Analysis Using FinBERT", IEEE Access, Vol. 11, pp. 120530-120540, Oct. 2023. https://doi.org/10.1109/ACCESS.2023.3324535

[12] G. P. Zhang, "Time series forecasting using a hybrid ARIMA and neural network model", Neurocomputing, Vol. 50, pp. 159-175, Jan. 2003. https://doi.org/10.1016/S0925-2312(01)00702-0

[13] H. Liu, H.-Q. Tian, and Y.-F. Li, "Comparison of two new ARIMA-ANN and ARIMA-Kalman hybrid models for wind speed prediction", Applied Energy, Vol. 98, pp. 415-424, Oct. 2012. https://doi.org/10.1016/j.apenergy.2012.04.001

[14] Korea Power Exchange (KPX), "Hourly System Marginal Price (SMP)", Electric Power Statistics Information System (EPSIS), https://epsis.kpx.or.kr/epsisnew/selectEkmaSmpShdChart.do?menuId=040202 [accessed: Jan. 10, 2026]

1993. 5 : MS degree, Dept. of Computer Science, University of Massachusetts Lowell

1997. 5 : PhD degree, Dept. of Computer Science, University of Massachusetts Lowell

1997. 7 ~ 1999. 8 : Senior Researcher, Samsung Electronics Telecommunication Research Institute

1999. 9 ~ present : Professor, Dept. of Information Security, Mokpo National University

Research interests : Autonomous Self-Adaptive Learning, Deep Learning Applications, Optimization