LS-based Self-Positioning in GPS-Denied Environment using Stepped-Frequency Radar and CNN-based Target Association

Abstract

This paper proposes a method for self-positioning in Global Positioning System (GPS)-denied environments using stepped-frequency radar and four reference targets. From simulated radar range profiles, an Evolutionary Programming (EP)-based CLEAN algorithm is applied to precisely extract scattering center information, while a convolutional neural network (CNN) classifier distinguishes the reference targets with a recognition accuracy of 98.8%. The identified target information is then used in Least Square (LS) estimation to determine the final position. Monte Carlo simulations conducted 100 times under Signal-to-Noise Ratio (SNR) levels of 15, 10, and 5 dB yielded Root Mean Square Errors (RMSE) of 0.80 m, 0.93 m, and 1.26 m, respectively. These results validate the feasibility of self-positioning in GPS-denied environments using radar and reference targets.


Keywords:

GPS-denied, stepped-frequency radar, CNN, EP-based CLEAN, LS estimation

Ⅰ. Introduction

Global Positioning System (GPS) technology is widely used in both military and civilian applications. However, GPS cannot estimate positions accurately indoors or in urban canyons, and in military environments it is susceptible to external interference such as jamming and spoofing [1][2]. To address these challenges, various studies have investigated position estimation in GPS-denied environments [3][4]. Several technologies are currently used for position estimation, including vision-based [5][6], ultrasonic, LiDAR, and radar methods. The vision-based method, which captures images with cameras and estimates positions through image processing, is mainly used outdoors and offers high accuracy, but its performance may degrade in poor weather or low light. The ultrasonic method, which emits sound waves and measures the time until they are reflected back, is primarily used indoors and provides cost-effective, stable position estimation, although it may not be suitable for high-precision tracking [7]. The LiDAR-based method scans the surrounding environment using either a rapidly rotating mirror or multiple laser emitters and calculates distance from the time taken for the laser beams to reflect off objects and return. These data yield a 3D point cloud, which enables precise estimation of the target’s location on a high-resolution 3D map [8][9]. LiDAR can scan the environment swiftly using light and remains unaffected by weather conditions or environmental changes. However, it is expensive and requires specialized maintenance. Similar to LiDAR, the radar method can accurately estimate positions in various environments and delivers consistent performance regardless of weather conditions or time of day [10]-[12]. However, high-resolution position estimation requires a wide bandwidth, and the detected data must be processed further.
Consequently, this study proposes a self-positioning method using stepped-frequency radar, which covers a wide bandwidth through a sequence of discrete frequency steps and thereby obtains high-resolution range profiles. A range profile displays the reflected signal strength as a function of range, so a wider bandwidth separates closely spaced scatterers more finely.

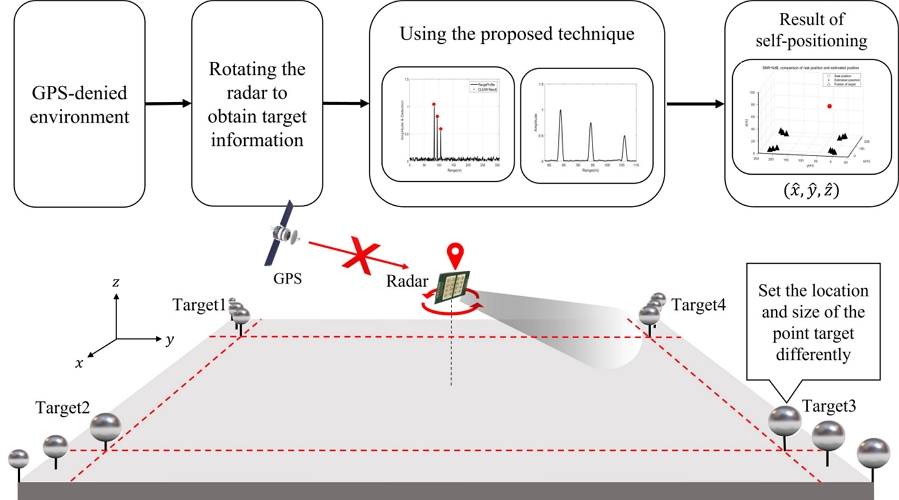

By using the Evolutionary Programming (EP)-based CLEAN algorithm [13], we can accurately determine the range information between the moving vehicle and the targets. Additionally, by training a Convolutional Neural Network (CNN) classifier with the obtained range profiles, we can discern the information of the targets designated for position estimation. To estimate the position of the moving vehicle using the Least Square (LS) estimation method, it is necessary to ascertain the range information between the targets and the moving vehicle, as well as the positions and identification numbers of the targets. The target identification number is obtained through the classifier, and the positions of the targets are predefined by the user. Consequently, we confirmed that we could estimate the position of the moving vehicle by applying the range information derived from the EP-based CLEAN algorithm and the target identifiers obtained through the CNN classifier to the LS method. To demonstrate the proposed method, we developed a simulation environment and implemented the aforementioned algorithms to assess the difference between the set positions and the estimated positions. The Root Mean Square Error (RMSE) was employed as the validation method to confirm the effectiveness of the approach proposed in this paper. Fig. 1 illustrates the conceptual operation of the method discussed in this study.

Ⅱ. Self-positioning algorithm

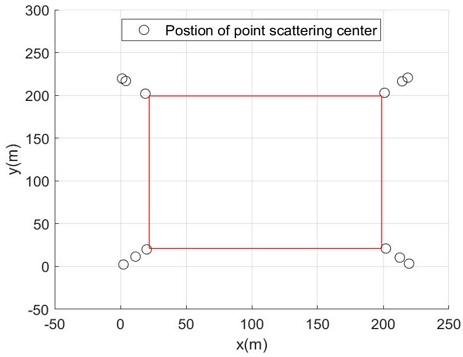

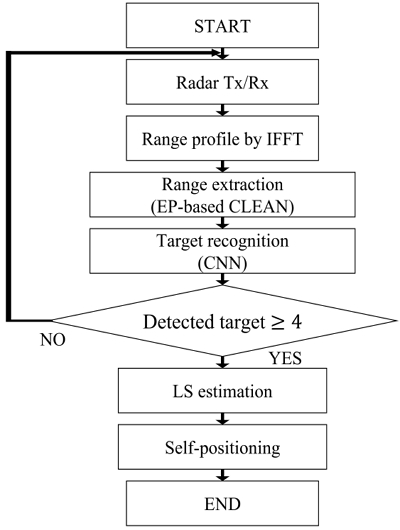

Before explaining the self-positioning algorithm, we make the following assumptions. First, the moving vehicle lies within the position-estimable region, a rectangle whose vertices are the reference targets, as illustrated in Fig. 2; position estimation is feasible inside this rectangle. Each target consists of several scattering centers. Second, a stepped-frequency radar mounted on the moving vehicle rotates to collect the data needed for position estimation. Fig. 3 shows a block diagram of the proposed algorithm. The LS estimation method is employed for self-positioning. To apply the LS estimation method, two preliminary steps are required:

First, the range profile is obtained by performing an Inverse Fast Fourier Transform (IFFT) on the data collected by the radar. Next, the precise range is extracted using the EP-based CLEAN method. For target recognition, CNN classification is utilized. Once these preliminary steps are successfully completed and four targets are detected, self-positioning using LS estimation can be performed.

2.1 LS estimation

In this section, a mathematical interpretation of the LS estimation is presented. As initially assumed, the radar mounted on the moving vehicle rotates and collects data from the targets, from which the range between each target and the radar is obtained. Since the user predetermines the target positions, these positions are already known. Consequently, the range equations can be formulated as follows:

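| $(x - x_1)^2 + (y - y_1)^2 + (z - z_1)^2 = d_1^2$ | (1) |

| $(x - x_2)^2 + (y - y_2)^2 + (z - z_2)^2 = d_2^2$ | (2) |

| $(x - x_3)^2 + (y - y_3)^2 + (z - z_3)^2 = d_3^2$ | (3) |

| $(x - x_4)^2 + (y - y_4)^2 + (z - z_4)^2 = d_4^2$ | (4) |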

d1, d2, d3, d4 denote the distances between the radar and each target. For each target, the range used is the distance from the radar to the target's nearest scattering center. xn, yn, zn denote the 3D coordinates of the n-th target, where n = 1, 2, 3, 4 is the target index. x, y, z are the coordinates of the radar to be estimated. (1) to (4) can be transformed into the matrix form shown in (5) through the process described in [14]-[16].

| $X t = b$ | (5) |

| (6) |

| $t = [\,x \;\; y \;\; z\,]^{T}$ | (7) |

| (8) |

In (5), X, t, b are expressed as (6) to (8), respectively. Here, (7) denotes the 3D position information of the moving vehicle with the radar. By using (6) and (8) in matrix form, we can perform LS estimation to estimate the position of the moving vehicle. The LS estimation method is summarized as follows [14]-[18]:

| $\hat{t} = (X^{T} X)^{-1} X^{T} b$ | (9) |

The estimated 3D position information of the moving vehicle is denoted as $\hat{t}$. Therefore, the coordinates of the moving vehicle can be estimated using LS estimation. However, if the distance information d1, d2, d3, d4 between the moving vehicle and the targets is inaccurate, the estimation error increases. Therefore, the extraction of accurate range information is essential. Correctly identifying the target that generated each radar measurement is also crucial. Otherwise, a mismatch between the range information and the targets can occur, leading to inaccurate position estimation. To address these issues, two preliminary steps are conducted prior to LS estimation. The EP-based CLEAN method is employed to extract range information and pinpoint each target’s scattering centers. Next, a CNN classifier is used to associate each range profile with its corresponding target. The following sections explain the EP-based CLEAN method and the CNN in detail.
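The LS step in this section can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: it assumes one common linearization (subtracting the first range equation from the others), which is not necessarily the exact form of (6) and (8). The anchor coordinates and the name `ls_position` are hypothetical, and the anchors are deliberately given different heights because this particular linearization loses rank when all anchors are coplanar.

```python
import numpy as np

def ls_position(anchors, dists):
    """Estimate (x, y, z) from ranges to known anchors by linear least squares.

    Subtracting the first range equation from the others cancels the
    quadratic term x^2 + y^2 + z^2 and leaves a linear system X t = b.
    """
    anchors = np.asarray(anchors, dtype=float)
    dists = np.asarray(dists, dtype=float)
    p1, d1 = anchors[0], dists[0]
    X = 2.0 * (anchors[1:] - p1)
    b = (d1**2 - dists[1:]**2
         + np.sum(anchors[1:]**2, axis=1) - np.sum(p1**2))
    # lstsq returns the same solution as (X^T X)^{-1} X^T b when X has full rank.
    t_hat, *_ = np.linalg.lstsq(X, b, rcond=None)
    return t_hat

# Hypothetical anchor layout (metres); heights differ so that X has full rank.
anchors = np.array([[0.0, 0.0, 0.0],
                    [220.0, 0.0, 10.0],
                    [0.0, 220.0, 20.0],
                    [220.0, 220.0, 5.0]])
true_pos = np.array([110.0, 110.0, 40.0])
dists = np.linalg.norm(anchors - true_pos, axis=1)   # noise-free ranges

t_hat = ls_position(anchors, dists)
print(t_hat)
```

With noise-free ranges the estimate coincides with the true position up to floating-point error; adding noise to `dists` shows how range errors propagate into the position estimate.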

2.2 EP-based CLEAN

The EP-based CLEAN algorithm, which was previously used in [13], is applied to accurately extract range information between the moving vehicle and point targets. EP is a computational technique that solves optimization problems by emulating biological evolutionary principles. The process begins with the random generation of initial candidates, each representing a potential solution. These candidates are evaluated for fitness, after which those with high fitness are mutated to preserve diversity and avoid local minima. The fitness of the mutated individuals is then reassessed, and selections are made for the next generation. This iterative process is repeated for a specified number of generations to obtain the optimal solution. This method is generally considered more effective than gradient descent techniques for finding a global optimum. By using this approach, the optimal range and amplitude can be precisely determined from the radar data. EP-based CLEAN is advantageous for its fast, step-by-step extraction method, unlike approaches that attempt to extract all parameters simultaneously. Since CLEAN assumes an undamped exponential model, it is applied in the high-frequency band. The equation below mathematically represents the signal received by a stepped-frequency radar [13]:

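| $E(f_q) = \sum_{m=1}^{M} a_m \exp\left(-j \dfrac{4\pi f_q R_m}{c}\right), \quad q = 1, 2, \ldots, Q$ | (10) |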

The detailed process of the EP-based CLEAN algorithm, as proposed in [13], is summarized as follows. Let M be the number of scattering centers to be estimated and Q the number of frequency samples. The received signal of the stepped-frequency radar is denoted as Em(fq), where m indicates the iteration step, fq is the q-th frequency sample, and c is the speed of light. In each iteration, the amplitude am and range Rm of the m-th scattering center are estimated by minimizing the cost function Jm using EP.

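| $J_m = \sum_{q=1}^{Q} \left| E_m(f_q) - a_m \exp\left(-j \dfrac{4\pi f_q R_m}{c}\right) \right|^2$ | (11) |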

The contribution of the identified scattering center is then removed from the received signal to compute the residual:

| $E_{m+1}(f_q) = E_m(f_q) - a_m \exp\left(-j \dfrac{4\pi f_q R_m}{c}\right)$ | (12) |

This procedure is repeated until all M scattering centers are extracted. The method enables more accurate estimation of range and amplitude from radar received signals by isolating the influence of each scattering center.
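The extract-and-subtract loop described above can be illustrated with the following Python sketch. It is a simplified stand-in rather than the algorithm of [13]: EP searches only the range, the complex amplitude is solved in closed form for each candidate range, and selection simply keeps the fittest half of parents plus offspring. The function names, the population settings, and the three-scatterer test target are all assumptions made for illustration.

```python
import numpy as np

C = 299_792_458.0  # speed of light [m/s]

def steering(freqs, R):
    """Undamped exponential response of a unit scatterer at range R."""
    return np.exp(-1j * 4.0 * np.pi * freqs * R / C)

def fit_cost(E, freqs, R):
    """Residual energy after removing the best-fit scatterer at range R."""
    s = steering(freqs, R)
    a = np.vdot(s, E) / len(freqs)          # closed-form least-squares amplitude
    return np.sum(np.abs(E - a * s) ** 2), a

def ep_clean(E, freqs, M, pop=30, gens=40, sigma0=0.2, seed=0):
    rng = np.random.default_rng(seed)
    Q = len(freqs)
    bin_size = C / (2.0 * (freqs[1] - freqs[0]) * Q)   # range per IFFT bin
    residual = E.copy()
    found = []
    for _ in range(M):
        # The coarse IFFT peak seeds the initial population near the scatterer.
        R0 = np.argmax(np.abs(np.fft.ifft(residual))) * bin_size
        parents = R0 + rng.uniform(-bin_size, bin_size, pop)
        sigma = sigma0
        for _ in range(gens):
            children = parents + sigma * rng.standard_normal(pop)  # mutation
            both = np.concatenate([parents, children])
            costs = np.array([fit_cost(residual, freqs, R)[0] for R in both])
            parents = both[np.argsort(costs)[:pop]]    # keep the fittest
            sigma *= 0.9                               # shrink mutation step
        R_best = parents[0]
        _, a_best = fit_cost(residual, freqs, R_best)
        residual = residual - a_best * steering(freqs, R_best)   # CLEAN step
        found.append((R_best, abs(a_best)))
    return found

# Hypothetical target: three point scatterers (ranges in metres).
f0, B, Q = 24e9, 250e6, 512
freqs = f0 + (B / Q) * np.arange(Q)
true_R, true_a = [50.0, 53.0, 58.0], [1.0, 0.7, 0.5]
E = sum(a * steering(freqs, R) for a, R in zip(true_a, true_R))

extracted = ep_clean(E, freqs, M=3)
print(sorted(extracted))
```

Seeding the population around the IFFT peak mirrors the idea of searching only near the scattering centers, which keeps the population and generation counts small.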

2.3 Target recognition

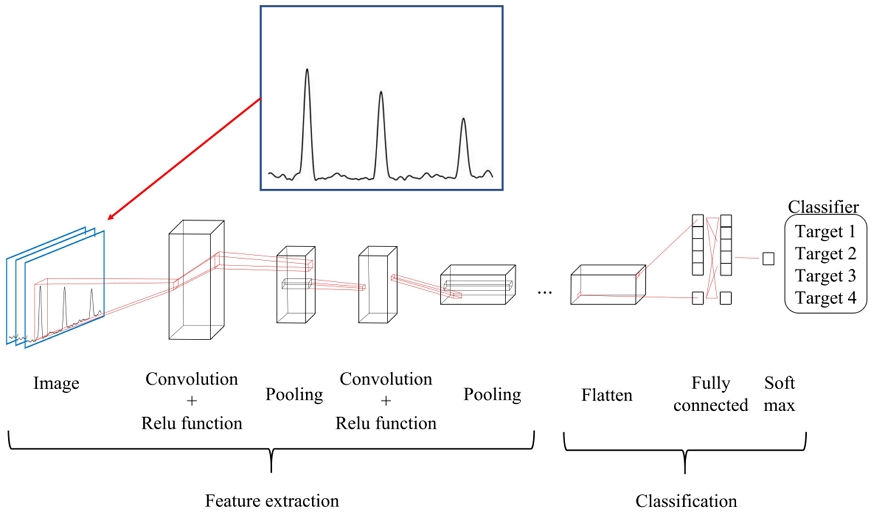

To estimate the position of a moving vehicle using LS estimation, it is essential to identify the target from which the measured range was obtained. However, since the signals received by the rotating radar do not indicate the identity of the target, target recognition becomes necessary. A CNN classifier was trained to recognize each target using its respective range profile [19]-[21]. A CNN is a multilayer neural network designed to classify image or video data, and it shows outstanding performance in fields such as image recognition, classification, object detection, and video analysis. As shown in Fig. 4, a typical CNN architecture consists of an input layer, convolutional layers, a ReLU function, pooling layers, fully connected layers, and an output layer. The input layer typically has a grid-like structure and is represented as a matrix of pixel values. The convolutional layer, a core component of CNNs, applies convolution operations to the input data to generate feature maps.

These operations slide small kernels over the input data and perform element-wise multiplication and summation, thereby emphasizing specific image features. Kernels are small matrices applied across the entire input to detect a variety of features. The ReLU function, the most commonly used activation function, introduces non-linearity into the model after each convolution operation. Its output equals the input when positive and is zero when negative, enabling the network to capture complex patterns. The pooling layer reduces the dimensionality of feature maps, which improves computational efficiency and helps prevent overfitting. In this study, max pooling was used. The fully connected layer processes the features that have passed through multiple convolutional and pooling layers, with each neuron connected to all activations in the preceding layer, similar to traditional artificial neural networks. The final fully connected layer outputs the probability for each class in classification tasks by applying an activation function such as softmax to convert the network’s output into probabilities.
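To make the three building blocks concrete, the sketch below applies a convolution, ReLU, and max pooling to a toy one-dimensional profile using NumPy only. It is an illustration under assumptions: the network in this paper uses 2D convolutions with learned kernels, whereas the kernel and input values here are hypothetical and fixed by hand. As in most CNN libraries, the "convolution" is implemented as a sliding dot product without flipping the kernel.

```python
import numpy as np

def conv1d_valid(x, kernel):
    """Slide the kernel over x; multiply element-wise and sum (no padding)."""
    n = len(x) - len(kernel) + 1
    return np.array([np.dot(x[i:i + len(kernel)], kernel) for i in range(n)])

def relu(x):
    """Pass positive values through and clamp negative values to zero."""
    return np.maximum(x, 0.0)

def max_pool1d(x, size):
    """Keep the maximum of each non-overlapping window of length `size`."""
    usable = (len(x) // size) * size        # drop the incomplete last window
    return x[:usable].reshape(-1, size).max(axis=1)

# Toy profile with two peaks and a hypothetical peak-enhancing kernel.
profile = np.array([0.0, 0.1, 1.0, 0.1, 0.0, 0.0, 0.6, 0.0, 0.0])
kernel = np.array([-1.0, 2.0, -1.0])

features = max_pool1d(relu(conv1d_valid(profile, kernel)), size=2)
print(features)   # two non-zero entries mark the two peaks
```

The pooled output is shorter than the input yet still marks where the two peaks were, which is the dimensionality reduction described above.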

The CNN architecture used in this paper consists of 14 layers and follows a typical CNN design in which 2D convolution–batch normalization–ReLU–MaxPooling blocks are stacked sequentially, as shown in Table 1, followed by a fully connected layer and a classification layer. The network was trained using the Stochastic Gradient Descent with Momentum (SGDM) optimizer, with an initial learning rate of 0.001, 5 epochs, and a mini-batch size of 4. During training, the data were shuffled at every epoch, and validation accuracy was evaluated every 10 steps using the validation dataset.

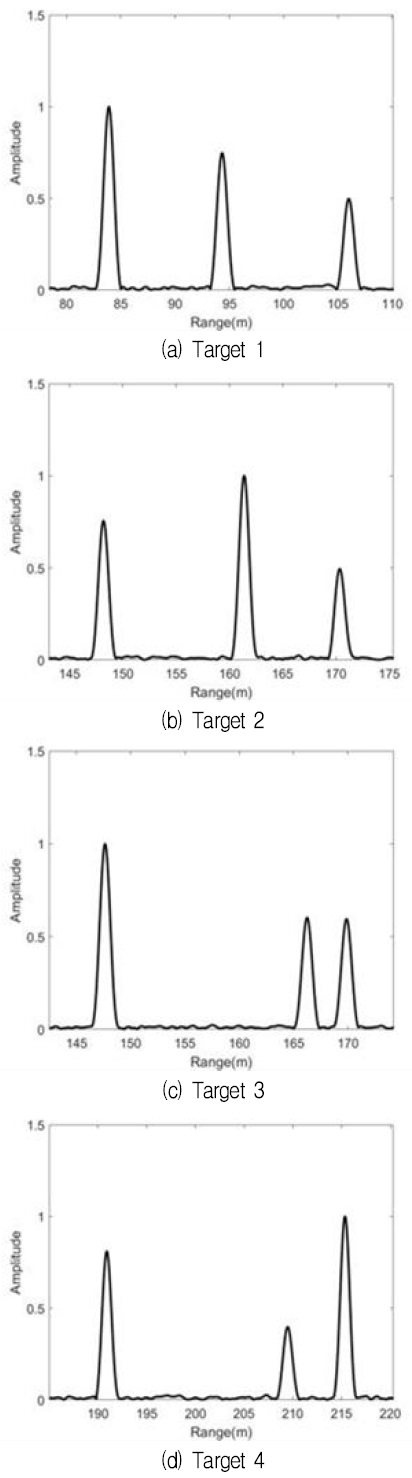

CNNs autonomously learn features from input images and hierarchically combine these features to recognize high-level patterns. In this study, distinct features were generated for each reference target to enable CNNs to effectively learn and recognize the range profile characteristics of each target. These features are presented in Table 2. The reference targets, each composed of three point scatterers, differ in the distances between the scatterers and in their amplitudes. These characteristics are clearly reflected in the range profiles. The distance between the first and second point scatterers is denoted as d1to2, and the distance between the second and third point scatterers is denoted as d2to3. The amplitudes of the respective point scatterers are set as amplitude 1, amplitude 2, and amplitude 3, which correspond to am in (10).

To train the CNN classifier, training data were collected from various locations. Since the distances between scattering centers in the range profile vary with the radar’s position, it was important that the model be trained using data measured from multiple locations. The training data were obtained by moving the vehicle at regular intervals within the position estimation range. By acquiring range profiles from various locations, the reliability of the classifier for target recognition was improved. In the range profiles used for target recognition, if the distances between scattering centers are too short, the centers may appear to overlap, which can result in inaccurate recognition. Therefore, a window function that includes only the section from 5 m before the first scattering center to 5 m after the last scattering center of the target was applied for training.

By focusing solely on the section around the scattering centers in the range profile, overlapping can be minimized even when the scattering centers are closely placed. This approach not only enhances the performance of target recognition but also provides spatial efficiency, as the scatterers do not need to be installed at wide intervals. A rectangular window function was employed for this purpose. The range profiles obtained for each target using this method were classified using a CNN. Fig. 5 shows the range profiles used for training. As seen in Fig. 5, the distance and amplitude between scattering centers differ for each target, indicating that each target has unique features in its range profile. Therefore, it is possible to determine from which target the range information originated based on the range profile.
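The windowing step can be sketched as below. This is a minimal Python illustration that assumes the rectangular window is applied by cropping the profile to the interval from 5 m before the first scattering center to 5 m after the last one; the function name, the range axis, and the example ranges are hypothetical.

```python
import numpy as np

def window_profile(ranges, profile, first_center, last_center, margin=5.0):
    """Keep only samples within `margin` metres of the target's scattering centers."""
    mask = (ranges >= first_center - margin) & (ranges <= last_center + margin)
    return ranges[mask], profile[mask]

# Hypothetical range axis (0.5 m spacing) and a placeholder profile.
ranges = np.arange(0.0, 100.0, 0.5)
profile = np.random.default_rng(0).random(ranges.size)

# Scattering centers assumed at 50 m (first) and 58 m (last).
win_ranges, win_profile = window_profile(ranges, profile, 50.0, 58.0)
print(win_ranges[0], win_ranges[-1], win_ranges.size)
```

Cropping rather than zeroing the samples outside the window also shrinks the classifier input; zeroing is an equally valid reading of the text if a fixed input length is required.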

Ⅲ. Simulation and result

The received signals of the stepped-frequency radar, together with the radar’s position and the position and amplitude of each target, were simulated in MATLAB. The stepped-frequency radar was set to start at 24 GHz with a bandwidth of 250 MHz, and the number of frequency samples was fixed at 512. The resulting range resolution of the range profile is approximately 0.6 m. Before proceeding with the simulation, it is essential to set the parameters of the EP-based CLEAN algorithm correctly. The performance of the algorithm depends on these parameters, which include population, generation, and mutation rate.
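The quoted resolution follows from the bandwidth as c/(2B), and a range profile is the IFFT magnitude of the frequency samples. The Python sketch below checks both under stated assumptions: a uniform frequency step of B/Q, the undamped exponential signal model, and a hypothetical three-scatterer target. It is not the MATLAB code used for the simulations.

```python
import numpy as np

C = 299_792_458.0                 # speed of light [m/s]
f0, B, Q = 24e9, 250e6, 512       # start frequency, bandwidth, samples
df = B / Q                        # assumed uniform frequency step
freqs = f0 + df * np.arange(Q)

range_resolution = C / (2.0 * B)      # about 0.6 m
unambiguous_range = C / (2.0 * df)    # about 307 m

def range_profile(E):
    """Return the range axis and the IFFT magnitude normalised to a peak of 1."""
    mag = np.abs(np.fft.ifft(E))
    ranges = np.arange(len(E)) * C / (2.0 * df * len(E))
    return ranges, mag / mag.max()

# Hypothetical target: three point scatterers (ranges in metres).
scat_R = [50.0, 53.0, 58.0]
scat_a = [1.0, 0.7, 0.5]
E = sum(a * np.exp(-1j * 4.0 * np.pi * freqs * R / C)
        for a, R in zip(scat_a, scat_R))

ranges, profile = range_profile(E)
peak_range = ranges[np.argmax(profile)]
print(range_resolution, unambiguous_range, peak_range)
```

The strongest peak lands within one range bin of the strongest scatterer, which is why a finer method such as EP-based CLEAN is needed to recover the range more precisely than the bin spacing.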

The population refers to the number of individuals initially generated, generation refers to the number of iterations needed for optimization, and the mutation rate determines the level of variation applied when generating new individuals. A high mutation rate introduces more variation, helping to avoid local minima, but excessively high rates may prevent convergence and mimic a random search. Therefore, it is crucial to find an appropriate balance. The parameter values used for the EP in this study are presented in Table 3.

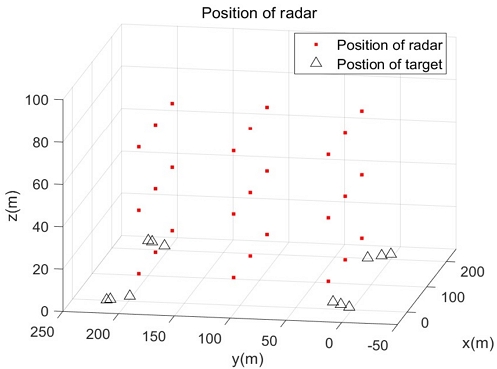

Increasing the parameter values of the EP-based CLEAN algorithm increases computation time. To mitigate this, the EP-based CLEAN algorithm was applied only around the scattering centers. The raw data received by the radar were processed using IFFT, and as many maxima as there are scattering centers were identified, giving the approximate locations of these centers. By generating initial candidates only around these locations, the range to the scattering centers could be determined with smaller populations and fewer generations, thereby reducing computation time. In this paper, the average execution time of one EP-based CLEAN run extracting all three scattering centers from a single range profile was 3.025 s (averaged over 30 runs on an Intel i5-13400 with 16 GB RAM, MATLAB R2023b, without GPU).

For LS estimation, the locations and sizes of the targets were pre-set, so the total number of scattering centers, denoted as M, is known. As mentioned earlier, the radar rotates while measuring the targets, and each target is implemented with three point scattering centers. The point scattering centers were modeled as spherical reflectors to ensure consistent data acquisition from any direction. The four targets, each consisting of three point scattering centers, are positioned at the corners of a square, as illustrated in Fig. 2. The interior of the square indicates the range within which position estimation is feasible. To introduce variations in the range profiles, the positions and sizes of the scattering centers were adjusted for each target.

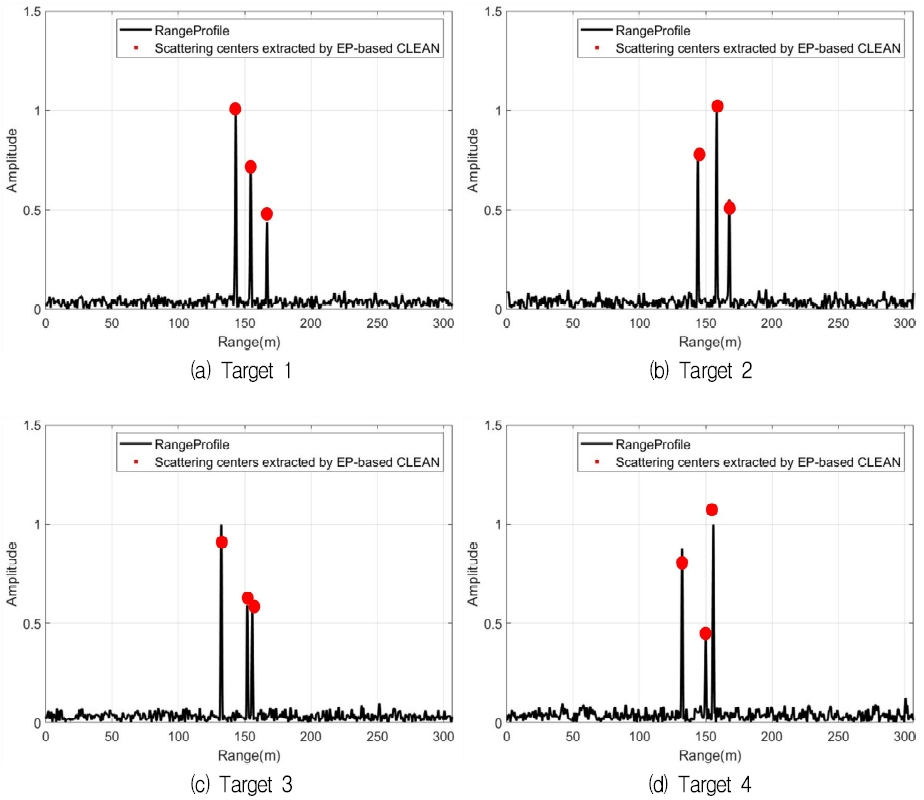

Fig. 6 shows the range profiles generated using radar signals received from each target, along with the amplitude and range information of the scattering centers extracted via the EP-based CLEAN algorithm. In the figure, the red dots represent the range and amplitude of the scattering centers. The range profiles were normalized such that the maximum amplitude was scaled to 1. Although the EP-based CLEAN algorithm successfully extracted amplitude and range information, it was still unclear which specific target each set of range data corresponded to. Therefore, the CNN described earlier was employed for additional target identification.

To evaluate the performance of the CNN, the dataset was divided into training and test data in a 7:3 ratio, and the accuracy was assessed. A total of 5,968 data samples were collected, of which 4,176 were used for training and 1,792 for testing. As shown in Table 4, a confusion matrix was generated to calculate the accuracy. Ultimately, an accuracy of 98.83% was achieved. This confirms that target recognition using the range profile is effective. Next, LS estimation was performed using data obtained by integrating the EP-based CLEAN algorithm with the CNN. To ensure the robustness and reliability of the results, simulations were conducted with the radar placed at various positions.

Fig. 7 illustrates the radar positions used in the simulation, indicated by red dots. To further evaluate robustness in noisy conditions, the SNR was set to 15 dB, 10 dB, and 5 dB, respectively. At each position and SNR level, 100 Monte Carlo simulations were performed. The probabilistic nature of the Monte Carlo method allowed for the incorporation of variability and uncertainty, enabling a more thorough and comprehensive analysis. RMSE was used to evaluate the algorithm proposed in this study. The formula for RMSE is as follows:

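| $\mathrm{RMSE} = \sqrt{\dfrac{1}{K} \sum_{k=1}^{K} \mathrm{error}_k^{2}}$ | (13) |

| $\mathrm{error}_k = \sqrt{(x - \hat{x}_k)^2 + (y - \hat{y}_k)^2 + (z - \hat{z}_k)^2}$ | (14) |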

Here, error denotes the distance between the estimated and actual locations, and K denotes the total number of iterations, which was set to 100 for this experiment. Table 5 presents the results of verifying position estimation accuracy using RMSE. It shows the average RMSE values calculated by aggregating the RMSE results for each SNR level across all radar positions.
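As a small worked example of this metric, the Python sketch below computes the RMSE of a set of position estimates against a known true position, taking the error of each trial as the Euclidean distance between estimate and truth. The function name and the two sample estimates are hypothetical and chosen so the arithmetic is easy to follow.

```python
import numpy as np

def rmse(estimates, truth):
    """Root mean square of the Euclidean position errors over K trials."""
    estimates = np.asarray(estimates, dtype=float)
    truth = np.asarray(truth, dtype=float)
    errors = np.linalg.norm(estimates - truth, axis=1)   # one distance per trial
    return np.sqrt(np.mean(errors ** 2))

# Hypothetical trials: both estimates are exactly 5 m from the true position.
truth = [110.0, 110.0, 40.0]
estimates = [[113.0, 114.0, 40.0],    # horizontal offset (3, 4, 0)
             [110.0, 110.0, 45.0]]    # vertical offset (0, 0, 5)

value = rmse(estimates, truth)
print(value)
```

In a Monte Carlo run, `estimates` would hold the K = 100 LS outputs obtained at one radar position and SNR level.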

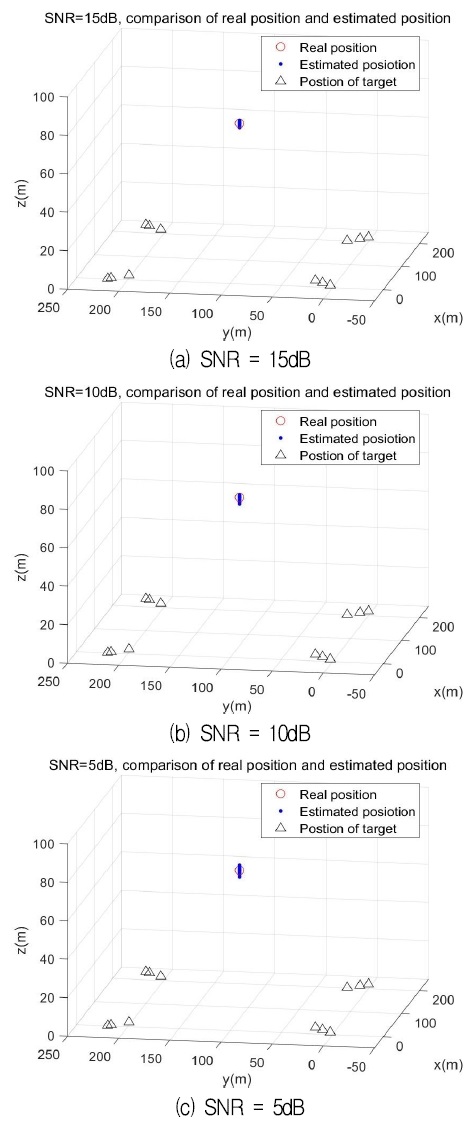

The average RMSE values for SNRs of 15 dB, 10 dB, and 5 dB are 0.7990 m, 0.9254 m, and 1.2624 m, respectively. The 15 dB and 10 dB cases achieved an average error within 1 m, while the 5 dB case showed an average error exceeding 1 m. When examining the RMSE at each location, it was observed that the RMSE increased as the distance between the radar and the targets decreased. This is attributable to the point scattering centers appearing closer together in the range profile when the radar approaches a target, which reduces accuracy during the range extraction phase. Additionally, simulations were conducted by changing the position-estimable area from the original rectangular shape to a pentagonal shape. For the pentagon, the average RMSE values for each SNR were 0.7922 m, 0.9262 m, and 1.2737 m, respectively. Therefore, in this study, the rectangular position-estimable area was adopted, as the difference in accuracy between the pentagonal and rectangular areas was minimal, and the rectangular shape requires fewer target recognition and range extraction computations.

Consequently, consistently low RMSE values were observed at coordinates near the center of the estimable area, specifically at (110, 110, 10), (110, 110, 40), and (110, 110, 70). Fig. 8 presents the estimated radar positions at different SNR levels, where the red circle indicates the true radar position and the blue dots denote the estimated positions. As shown in the figure, errors in the horizontal (X–Y) plane are generally small with negligible bias, whereas the vertical (Z) component exhibits relatively larger variability. This behavior is explained by Vertical Dilution of Precision (VDOP) [22][23]. Because the reference targets are arranged on the same plane, the geometry is less sensitive to the vertical component, so even small range errors are amplified along the Z-axis. The consistently small horizontal errors confirm that the proposed algorithm accurately estimates the radar’s horizontal position.
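The geometric effect can be checked numerically with a dilution-of-precision calculation. The Python sketch below is an illustration under assumptions: it uses the range-only form of DOP (no clock term), a hypothetical square of four coplanar targets at z = 0 with 220 m sides, and the radar at (110, 110, 40). None of these coordinates are taken from the paper's figures or tables.

```python
import numpy as np

def dops(anchors, position):
    """Horizontal and vertical DOP for range-only positioning."""
    diff = np.asarray(anchors, dtype=float) - np.asarray(position, dtype=float)
    H = diff / np.linalg.norm(diff, axis=1, keepdims=True)  # unit line-of-sight vectors
    cov = np.linalg.inv(H.T @ H)      # error covariance per unit range variance
    hdop = np.sqrt(cov[0, 0] + cov[1, 1])
    vdop = np.sqrt(cov[2, 2])
    return hdop, vdop

# Hypothetical coplanar targets at the corners of a 220 m square.
anchors = [[0.0, 0.0, 0.0],
           [220.0, 0.0, 0.0],
           [0.0, 220.0, 0.0],
           [220.0, 220.0, 0.0]]
radar = [110.0, 110.0, 40.0]

hdop, vdop = dops(anchors, radar)
print(hdop, vdop)
```

For this layout the lines of sight are nearly horizontal, so their vertical components are small and VDOP comes out roughly twice HDOP, consistent with the larger scatter seen along the Z-axis.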

Ⅳ. Conclusion

This paper proposes an LS-based self-positioning method using stepped-frequency radar and reference targets. The approach combines EP-based CLEAN for accurate range extraction and a CNN classifier for target identification, achieving 98.83% classification accuracy. LS estimation was then performed under various SNR conditions, with 100 Monte Carlo simulations for each case. The results show average RMSE values of 0.7990 m, 0.9254 m, and 1.2624 m for SNRs of 15, 10, and 5 dB, respectively, demonstrating the effectiveness of the proposed algorithm in accurately estimating the radar’s position. Future work will focus on extending the method using alternative optimization techniques and validating the algorithm through experimental measurements with actual radar systems.

Acknowledgments

This research was supported by the Regional Innovation System & Education (RISE) program through the Daejeon RISE Center, funded by the Ministry of Education (MOE) and the Daejeon Metropolitan City, Republic of Korea (2025-RISE-06-013). This work was also supported by the Institute of Information & communications Technology Planning & Evaluation (IITP) grant funded by the Korean Government (MSIT) (RS-2023-00260829, Development of automatic measurement·analysis·modeling technology for in-building 3D propagation characteristics).

References

[1] N. Dilshad, A. Ullah, J. H. Kim, and J. W. Seo, "LocateUAV: Unmanned Aerial Vehicle Location Estimation Via Contextual Analysis in an IoT Environment", IEEE Internet of Things Journal, Vol. 10, No. 5, pp. 4021-4033, Mar. 2023. [https://doi.org/10.1109/JIOT.2022.3162300]

[2] S. Rezwan and W. Y. Choi, "Artificial Intelligence Approaches for UAV Navigation: Recent Advances and Future Challenges", IEEE Access, Vol. 10, pp. 26320-26339, Mar. 2022. [https://doi.org/10.1109/ACCESS.2022.3157626]

[3] N. Gyagenda, J. V. Hatilima, H. Roth, and V. Zhmud, "A review of GNSS-independent UAV navigation techniques", Journal of Robotics and Autonomous Systems, Vol. 152, Jun. 2022. [https://doi.org/10.1016/j.robot.2022.104069]

[4] Y. Chang, Y. Cheng, U. Manzoor, and J. Murray, "A review of UAV Autonomous Navigation in GPS-denied Environments", Journal of Robotics and Autonomous Systems, Vol. 170, Dec. 2023. [https://doi.org/10.1016/j.robot.2023.104533]

[5] Y. Lu, Z. Xue, G.-S. Xia, and L. Zhang, "A survey on vision-based UAV navigation", Journal of Geo-Spatial Information Science, Vol. 21, No. 1, pp. 21-32, Dec. 2023. [https://doi.org/10.1080/10095020.2017.1420509]

[6] Y. Alkendi, L. Seneviratne, and Y. Zweiri, "State of the Art in Vision-Based Localization Techniques for Autonomous Navigation Systems", IEEE Access, Vol. 9, pp. 76847-76874, May 2021. [https://doi.org/10.1109/ACCESS.2021.3082778]

[7] D. Esslinger, M. Oberdorfer, M. Zeitz, and C. Tarin, "Improving ultrasound-based indoor localization systems for quality assurance in manual assembly", Proc. 2020 IEEE International Conference on Industrial Technology (ICIT), Buenos Aires, Argentina, Feb. 2020. [https://doi.org/10.1109/ICIT45562.2020.9067248]

[8] F. Caballero and L. Merino, "DLL: Direct LIDAR Localization. A map-based localization approach for aerial robots", Proc. 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, Sep. 2021. [https://doi.org/10.1109/IROS51168.2021.9636501]

[9] R. W. Wolcott and R. M. Eustice, "Fast LIDAR localization using multiresolution Gaussian mixture maps", Proc. 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, May 2015. [https://doi.org/10.1109/ICRA.2015.7139582]

[10] H. Godrich, A. M. Haimovich, and R. S. Blum, "Target Localization Accuracy Gain in MIMO Radar-Based Systems", IEEE Transactions on Information Theory, Vol. 56, No. 6, pp. 2783-2803, Jun. 2010. [https://doi.org/10.1109/TIT.2010.2046246]

[11] E. Dawson, M. A. Rashed, W. Abdelfatah, and A. Noureldin, "Radar-Based Multisensor Fusion for Uninterrupted Reliable Positioning in GNSS-Denied Environments", IEEE Transactions on Intelligent Transportation Systems, Vol. 23, No. 12, pp. 23384-23398, Sep. 2022. [https://doi.org/10.1109/TITS.2022.3202139]

[12] W. Li, R. Chen, Y. Wu, and H. Zhou, "Indoor positioning system using a single-chip millimeter wave radar", IEEE Sensors Journal, Vol. 23, No. 5, pp. 5232-5242, Mar. 2023. [https://doi.org/10.1109/JSEN.2023.3235700]

[13] I. S. Choi and H. T. Kim, "One-dimensional evolutionary programming-based CLEAN", Electronics Letters, Vol. 37, No. 6, pp. 400-401, Mar. 2001. [https://doi.org/10.1049/el:20010259]

[14] J. H. Kim, H. S. Nam, and I. S. Choi, "Self-positioning method using Stepped-frequency Radar and Point target", Proc. of 2024 KICS Winter Conference, Pyeongchang, South Korea, pp. 1138-1139, Jan. 2024.

[15] I. S. Choi and J. H. Kim, "3D Self-positioning Method Using Stepped-Frequency Radar and MMSE Estimator", Proc. of Innovative Computing, pp. 283-287, Jun. 2024. [https://doi.org/10.1007/978-981-97-4182-3_36]

[16] J. H. Kim, S. W. Oh, and I. S. Choi, "Self-Position Estimation Technique using PSO in GPS-denied Environment", Proc. of 2025 KIIT Summer Conference, Jeju, South Korea, pp. 88-89, Jun. 2025.

[17] Y. Cheng and T. Zhou, "UWB indoor positioning algorithm based on TDOA technology", Proc. 2019 10th International Conference on Information Technology in Medicine and Education (ITME), Qingdao, China, Aug. 2019. [https://doi.org/10.1109/ITME.2019.00177]

[18] J. H. You and Y. G. Kwon, "Localization algorithm by using location error compensation through topology constructions", Journal of the Korea Institute of Information and Communication Engineering, Vol. 18, No. 9, pp. 2243-2250, Sep. 2014. [https://doi.org/10.6109/jkiice.2014.18.9.2243]

[19] Y. B. Kim, Y. M. Kim, and I. S. Choi, "State classification of wind turbine blade using convolutional neural network", Journal of Korean Institute of Information Technology, Vol. 20, No. 4, pp. 89-97, Apr. 2022. [https://doi.org/10.14801/jkiit.2022.20.4.89]

[20] Z. Liu, M. Waqas, J. Yang, A. Rashid, and Z. Han, "A Multi-Task CNN for Maritime Target Detection", IEEE Signal Processing Letters, Vol. 28, pp. 434-438, Feb. 2021. [https://doi.org/10.1109/LSP.2021.3056901]

[21] H. Bi, J. Deng, T. Yang, and L. Wang, "CNN-Based Target Detection and Classification When Sparse SAR Image Dataset is Available", IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, Vol. 14, pp. 23-24, Jun. 2021. [https://doi.org/10.1109/JSTARS.2021.3093645]

[22] L. C. Chu, P. H. Tseng, and K. T. Feng, "GDOP-Assisted Location Estimation Algorithms in Wireless Location System", IEEE GLOBECOM 2008, New Orleans, USA, pp. 1-5, Dec. 2008. [https://doi.org/10.1109/GLOCOM.2008.ECP.1032]

[23] K. H. Kim, S. W. Lee, B. S. Hwang, J. H. Seon, J. W. Kim, J. H. Kim, S. H. Kim, and J. Y. Kim, "Research on Improvement of GPS Altitude Accuracy in NLOS Environment by Applying VDOP-Based Weighting", The Journal of IIBC, Vol. 24, No. 6, pp. 51-56, Dec. 2024. [https://doi.org/10.7236/JIIBC.2024.24.6.51]

2018. 3 ~ 2024. 2 : BS degree, Dept. of Electrical and Electronic Engineering, Hannam University

2024. 3 ~ Present : MS candidate, Dept. of Electrical and Electronic Engineering, Hannam University

Research Interests : Radar Signal Processing, RCS Analysis, Radio Propagation Environment Analysis

1998. 2 : BS degree, Dept. of Electronic Engineering, Kyungpook National University

2000. 2 : MS degree, Dept. of Electrical and Electronic Engineering, POSTECH

2003. 3 : Ph.D. degree, Dept. of Electrical and Electronic Engineering, POSTECH

2003. 4 ~ 2004. 1 : Senior Research Engineer, LG Electronics

2004. 2 ~ 2007. 2 : Senior Research Engineer, Agency for Defense Development (ADD)

2007. 3 ~ Present : Professor, Dept. of Electrical and Electronic Engineering, Hannam University

Research interests : Radar Signal Processing, Radar System Design, RCS Analysis, EMI/EMC Analysis

1996. 2 : BS degree, Dept. of Electric Engineering, Naval Academy

2002. 2 : MS degree, Dept. of Computer & Communications Engineering, POSTECH

2011. 8 : Ph.D. degree, Dept. of Electronical Engineering, Texas A&M University, USA

2011. 3 ~ 2019. 2 : Navy Engineering Officer, Navy & ADD

2019. 3 ~ Present : Associate Professor, Division of Naval Officer Science, Mokpo National Maritime University

Research interests : Radar System Design, Antenna, RCS, Radio Propagation Environment Analysis

2009. 8 : BS degree, Dept. of Electronic Engineering, Hannam University

2011. 8 : MS degree, Dept. of Computer & Information Engineering, Inha University

2014. 8 : MBA degree, Graduate School of Industrial & Entrepreneurial Management, Chung-Ang University

2018. 2 : Ph.D. candidate, Dept. of Computer Science and Engineering, Korea University

2011. 7 ~ 2017. 12 : SW Research Engineer, Samsung Electronics

2018. 1 ~ Present : Senior Researcher, National Security Research Institute (The Affiliated Institute of ETRI)

Research interests : Cyber Security, Digital Twin Systems, AI-based Threat Modeling, Blockchain