Classification of ISAR Images using 2D PCA and Neural Network Classifier

Abstract

We propose an efficient method for classifying Inverse Synthetic Aperture Radar (ISAR) images with robustness to both translation and rotation. To achieve rotational invariance, we estimate the Relative Rotation Angle (RRA) by applying a two-Dimensional (2D) Fourier Transform (FT) followed by polar mapping of the spectral magnitude. The angle that maximizes the correlation between the polar-mapped spectra of the training and test images is selected as the RRA. The test image's 2D FT spectrum is then rotated by the estimated RRA, and a 2D inverse FT is performed to restore spatial domain data with corrected orientation. Classification is subsequently conducted using two-Dimensional Principal Component Analysis (2D-PCA) and a simple neural network classifier with two hidden layers. The proposed method demonstrates high classification accuracy under low signal-to-noise ratio conditions, even with a limited training dataset, and shows greater resilience to image defocus compared to conventional techniques.

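Abstract (Korean version)

In this paper, we propose an effective method for classifying Inverse Synthetic Aperture Radar (ISAR) images that is invariant to translation and rotation. To estimate the relative rotation angle between the training and test images, the proposed method applies a two-dimensional Fourier transform to the ISAR image and maps the resulting spectrum onto polar coordinates. The angle that maximizes the correlation coefficient between the mapped training and test images is taken as the rotation angle; the 2D Fourier spectrum of the test image is then rotated by this angle and inverse-transformed to obtain rotation invariance. Classification is performed using two-dimensional principal component analysis and a simple neural network classifier with two hidden layers. The proposed method achieves high classification accuracy even with a small number of training samples and at low signal-to-noise ratios, and it is less sensitive to image blurring than existing methods.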

Keywords:

ISAR, radar imaging, radar target recognition, 2D PCA

Ⅰ. Introduction

Inverse Synthetic Aperture Radar (ISAR) imaging is a powerful technique for generating high-resolution two-Dimensional (2D) images of non-cooperative moving targets [1]. Owing to its ability to produce detailed 2D representations, ISAR has been widely adopted in various military applications [2][3]. In this work, we focus on leveraging ISAR images for the identification of unknown targets.

For successful classification of unknown ISAR images, it is essential that the classification process remains robust to variations in image orientation and cross-range scale. These variations primarily arise due to the target's motion. While the relative angular motion between the target and the radar enhances cross-range resolution in ISAR imaging, it also introduces variability in the orientation of the resulting 2D ISAR images within the image plane [4][5]. Such inconsistencies can significantly degrade classification performance if not properly addressed.

Another challenge in achieving successful ISAR image classification lies in the high dimensionality of feature vectors extracted from ISAR images. When the feature space is large, classifiers—whether statistical or neural network-based—must adopt complex structures to perform effectively [4]. This complexity not only increases computational cost but also raises the risk of overfitting, particularly when the training dataset is limited. To address this, it is desirable to reduce the dimensionality of ISAR image data. A common approach involves extracting representative features that capture local and geometric characteristics of the image, such as statistical moments and contrast [4]. Although this reduces computational burden and facilitates the design of simpler classifiers, it may also result in the loss of discriminative information that is critical for accurate target identification.

We previously proposed an efficient classification algorithm for ISAR images that is invariant to both translation and rotation [5]. The method applies a 2D Fourier Transform (FT) to the image and performs Polar Mapping (PM) of the FT spectrum, converting rotational differences into translational shifts along the angular axis. Classification is then carried out by correlating the polar-mapped test image with each training image across angular shifts, assigning the class with the highest correlation score. Although effective for handling rotation, this approach is sensitive to noise due to the normalization applied along the radial direction. Since low-frequency components dominate the 2D FT spectrum, normalization disproportionately amplifies high-frequency noise, which can degrade classification performance, especially at low Signal-to-Noise Ratios (SNRs) or with defocused ISAR images.

In this paper, we propose an efficient classification method for ISAR images that is invariant to translation and rotation. The method estimates the rotation angle by maximizing the correlation between polar-mapped 2D FT spectra of the training and test images [5]. The test image’s FT spectrum is then rotated and converted back to the image domain via inverse FT. Translational differences are corrected using Center-of-Mass (COM) alignment. To reduce dimensionality and enhance classification performance, 2D Principal Component Analysis (PCA) is applied [6], followed by classification using a deep neural network. Experimental results using ISAR images obtained from measured Radar Cross-Section (RCS) data show improved classification accuracy at low SNRs and robustness to image defocus.

Ⅱ. Algorithm description

2.1 Preprocessing

As in [5], this paper utilizes the key property of 2D FT: translation and rotation in the spatial (image) domain correspond to phase modulation and rotation in the frequency domain, respectively. In this paper, we omit the proof of this characteristic (see [5] for the detailed procedure). This characteristic means a rotation by an angle θa in the spatial domain corresponds to a rotation by the same angle in the frequency domain (u, v), centered at the zero-frequency point, which serves as the Rotation Center (RC). This property is exploited to estimate the Relative Rotation Angle (RRA) between the test and training ISAR images.

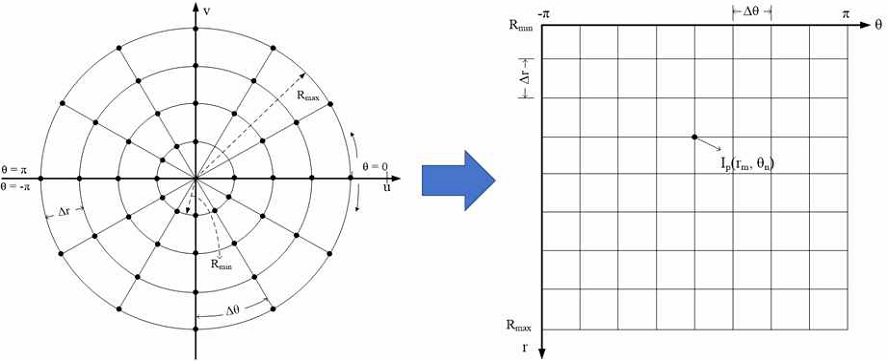

Because the center of rotation is at (0, 0) Hz in the 2D FT spectrum, rotation in the (𝑢, 𝑣) domain can be converted into translation along the angular axis 𝜃 by polar mapping the spectrum onto the (𝑟, 𝜃) domain (Fig. 2) [5]. Using the polar grid (Fig. 1), the polar-mapped image is resampled as Ip(rm, θn), m = 1, 2, …, Nr, n = 1, 2, …, Nθ, where Nr and Nθ denote the number of sampling points in the radial and angular directions, respectively. The polar coordinates (𝑟, 𝜃) are related to (𝑢, 𝑣) by:

| $u = u_c + r_m \cos\theta_n, \quad v = v_c + r_m \sin\theta_n$ | (1) |

where rm = Rmin + (m - 1)Δr, θn = -π + (n - 1)Δθ, Δr = (Rmax - Rmin)/(Nr - 1), and Δθ = 2π/(Nθ - 1). Here, (uc, vc) is the center position of the PM, and Rmax and Rmin are the radii of the largest and smallest sampling circles, respectively.
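The polar mapping can be sketched in NumPy as follows. This is only an illustration under stated assumptions: the function name `polar_map` is hypothetical, the rotation center (uc, vc) is taken to be the array center of an `fftshift`-ed spectrum, the angles are assumed to span -π to π, and bilinear interpolation is chosen for resampling (the paper does not state which interpolator is used).

```python
import numpy as np

def polar_map(spectrum, n_r, n_theta, r_min, r_max):
    """Resample a centered 2D spectrum magnitude onto an (r, theta) grid.

    Assumptions of this sketch: the rotation center is the array center and
    bilinear interpolation is used between the four surrounding samples.
    """
    spectrum = np.asarray(spectrum, dtype=float)
    rows, cols = spectrum.shape
    v_c, u_c = rows // 2, cols // 2

    # Radial and angular sampling positions.
    r = r_min + np.arange(n_r) * (r_max - r_min) / (n_r - 1)
    theta = -np.pi + np.arange(n_theta) * 2.0 * np.pi / (n_theta - 1)

    # Polar-to-Cartesian mapping: u = u_c + r cos(theta), v = v_c + r sin(theta).
    u = u_c + r[:, None] * np.cos(theta[None, :])
    v = v_c + r[:, None] * np.sin(theta[None, :])

    # Bilinear interpolation.
    u0 = np.clip(np.floor(u).astype(int), 0, cols - 2)
    v0 = np.clip(np.floor(v).astype(int), 0, rows - 2)
    du, dv = u - u0, v - v0
    return ((1 - du) * (1 - dv) * spectrum[v0, u0]
            + du * (1 - dv) * spectrum[v0, u0 + 1]
            + (1 - du) * dv * spectrum[v0 + 1, u0]
            + du * dv * spectrum[v0 + 1, u0 + 1])
```

The output has shape (Nr, Nθ), so a rotation of the input spectrum appears as a shift along the second axis.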

2.2 2D Principal component analysis

Since the Eigenfaces method was proposed in 1991 [7], PCA has become a widely used technique in both face recognition [8] and radar target classification [9].

In conventional PCA, however, 2D images must be reshaped into one-Dimensional (1D) vectors to compute the covariance matrix, resulting in a high-dimensional feature space.

To address this limitation, two-dimensional PCA (2D PCA) was proposed and successfully applied to face recognition tasks [6]. In this paper, 2D PCA is employed to reduce the dimensionality of ISAR images.

2D PCA finds a q-dimensional column vector X to project a p-by-q image A as follows:

| $Y = AX$ | (2) |

where Y is the p-dimensional projected vector. For this purpose, the image covariance (scatter) matrix is defined as

| $G_t = \frac{1}{K}\sum_{j=1}^{K} (A_j - \bar{A})^{T} (A_j - \bar{A})$ | (3) |

where K is the number of training images, Aj is the jth training image, and Ā is the average image of the K training images. The optimal projection axis X is the eigenvector of Gt corresponding to the largest eigenvalue. Because a single projection axis is generally not sufficient, a set of projection axes X1, …, Xd is selected, corresponding to the d largest eigenvalues.
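A minimal NumPy sketch of 2D PCA as formulated in [6] is given below. The function names `fit_2dpca` and `project` are hypothetical, and the scatter matrix is assumed to be averaged over the K training images.

```python
import numpy as np

def fit_2dpca(images, d):
    """Return the d projection axes (as columns) and the mean image.

    images: array of shape (K, p, q) holding K training images.
    """
    images = np.asarray(images, dtype=float)
    mean_image = images.mean(axis=0)
    centered = images - mean_image
    # Image scatter matrix G_t (q x q): average of (A_j - mean)^T (A_j - mean).
    g_t = np.einsum("kpi,kpj->ij", centered, centered) / images.shape[0]
    eigvals, eigvecs = np.linalg.eigh(g_t)      # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:d]       # indices of the d largest
    return eigvecs[:, order], mean_image

def project(image, axes):
    """Compress a p x q image into a p x d feature matrix, Y = A X."""
    return np.asarray(image, dtype=float) @ axes
```

Because Gt is only q × q, the eigendecomposition stays small even for large images, which is the practical advantage over vectorized PCA.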

2.3 Proposed method

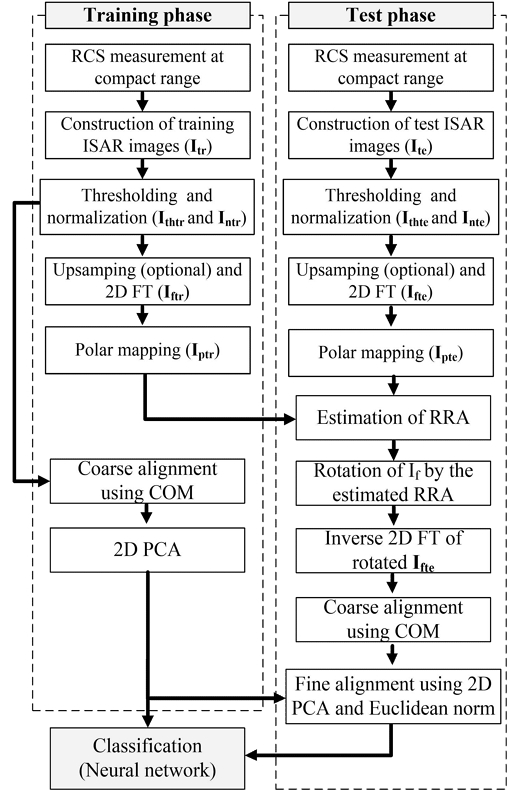

In the proposed method, classification is performed in the image domain to mitigate the impact of noise, and 2D PCA is applied to reduce the dimensionality of ISAR images. The overall process consists of the following five steps:

- 1. Preprocessing: Construction, thresholding, and normalization of ISAR images.

- 2. Fourier transform and PM: Upsampling of preprocessed images, computation of 2D Fourier Transform (FT) spectra, and generation of polar-mapped spectra.

- 3. Rotation compensation: Estimation of the Relative Rotation Angle (RRA) between training and test images using polar-mapped spectra, followed by rotation of the test image's 2D FT spectrum.

- 4. Image reconstruction and alignment: Inverse 2D FT of the rotated spectrum, coarse alignment using Center-of-Mass (COM), and fine alignment and dimensionality reduction using 2D PCA.

- 5. Classification: Final classification using a simple deep neural network.

The main contribution of the proposed method is that a complex neural network architecture is not required: by following Steps 1 through 4, invariance to rotation and translation is achieved, and the image size is significantly reduced. Therefore, a simple deep neural network is sufficient to achieve high classification accuracy.

In the first step, because SNR levels vary, both the training and test ISAR images (Itr, Ite) are thresholded using a mean threshold Th; pixels above Th are retained, and all others are set to zero. To account for signal variations caused by target distance, the images are further normalized by their total energy, yielding Intr (training) and Inte (test).
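The thresholding and normalization step might look like the sketch below. The function name `preprocess` is hypothetical, the threshold is expressed on a linear scale and defaults to the mean pixel value, and "normalized by total energy" is interpreted here as scaling the image to unit energy, which is an assumption.

```python
import numpy as np

def preprocess(image, threshold=None):
    """Threshold an ISAR image magnitude and scale it to unit total energy."""
    mag = np.abs(np.asarray(image, dtype=complex))
    if threshold is None:
        threshold = mag.mean()                   # mean pixel value as Th
    kept = np.where(mag > threshold, mag, 0.0)   # pixels at or below Th become zero
    energy = np.sum(kept ** 2)
    if energy == 0.0:
        return kept                              # nothing survived the threshold
    return kept / np.sqrt(energy)                # assumed: unit-energy normalization
```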

In the second step, to smooth the 2D FT image and reduce abrupt pixel transitions, the thresholded and normalized ISAR images are upsampled by zero-padding. Because this increases computational cost, it is optional. Then, the 2D FT images Iftr (training) and Ifte (test) are computed as the magnitudes of the 2D FTs of the images, which provides translational invariance and automatically places the RC at (0, 0) Hz.
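The translational invariance of the FT magnitude can be checked with a short NumPy sketch. The function name `ft_magnitude` and the square output size are assumptions made for illustration.

```python
import numpy as np

def ft_magnitude(image, out_size=None):
    """Centered 2D FT magnitude of an image, optionally zero-padded to out_size."""
    image = np.asarray(image, dtype=float)
    shape = image.shape if out_size is None else (out_size, out_size)
    spectrum = np.fft.fft2(image, s=shape)       # zero-pads when shape is larger
    return np.abs(np.fft.fftshift(spectrum))     # zero frequency moved to the center

# A small target and a translated copy give the same magnitude spectrum.
target = np.zeros((8, 8))
target[2:5, 3:6] = np.arange(1.0, 10.0).reshape(3, 3)
moved = np.roll(target, (1, 2), axis=(0, 1))     # shift stays inside the frame
same_magnitude = np.allclose(ft_magnitude(target, 16), ft_magnitude(moved, 16))
```

A translation only changes the phase of the spectrum, so discarding the phase removes the dependence on target position.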

The images Iptr (training) and Ipte (test) after PM are constructed as in (1). In this step, rotation is transformed into a translation in the θ direction only. Rmin need not be near 0, but Nθ must be large enough to estimate the RRA accurately. In this paper, we recommend Nθ ≥ 360 (an angular resolution of 1° or finer).

The third step is estimation of RRA using a simple correlation Cor(k) between Iptr and Ipte :

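| $\mathrm{Cor}(k) = \sum_{m=1}^{N_r}\sum_{n=1}^{N_\theta} I_{ptr}(r_m,\theta_n)\,\mathrm{CS}[I_{pte},k](r_m,\theta_n)$ | (4) |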

where CS[Ipte,k] is the image Ipte circularly shifted by k in the θ direction. For each image in the training database, the maximum of Cor(k) over all Nθ shifts is stored as a cost function. Then, the angle θmax that yields the highest cost function is selected as the RRA. The calculation of (4) can be accelerated by carrying it out in the frequency domain as follows:

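| $\mathrm{Cor}(k) = \sum_{m=1}^{N_r} \mathrm{IFT}_\theta\left[\mathrm{FT}_\theta[I_{ptr}] \cdot \mathrm{FT}_\theta[I_{pte}]^{*}\right]$ | (5) |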

where FTθ[•] and IFTθ[•] are, respectively, the one-dimensional FT and inverse FT (IFT) in the θ direction for each m.
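The frequency-domain correlation described above can be sketched with NumPy's 1D FFT along the θ axis. The function name `estimate_shift` is hypothetical, and the shift convention (the test image is shifted toward increasing θ index, as `np.roll` does) is an assumption; the opposite convention would move the conjugate to the other factor.

```python
import numpy as np

def estimate_shift(ip_train, ip_test):
    """Return (k_max, peak) of the circular correlation along the theta axis.

    Inputs have shape (N_r, N_theta). Cor(k) sums, over all radii, the product
    of ip_train with ip_test circularly shifted by k samples in theta.
    """
    f_train = np.fft.fft(ip_train, axis=1)
    f_test = np.fft.fft(ip_test, axis=1)
    # Correlation theorem applied row by row, then summed over the radial index.
    cor = np.fft.ifft(f_train * np.conj(f_test), axis=1).real.sum(axis=0)
    k_max = int(np.argmax(cor))
    return k_max, float(cor[k_max])
```

The returned index converts to an angle by multiplying by the angular step Δθ; the peak value is what would be stored as the cost function for each training image.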

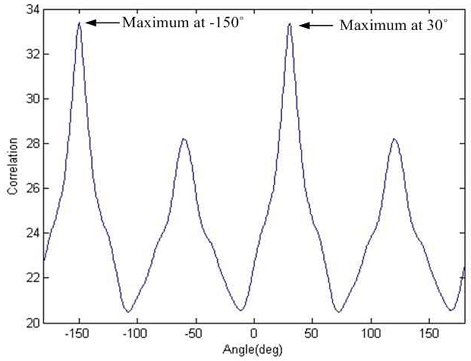

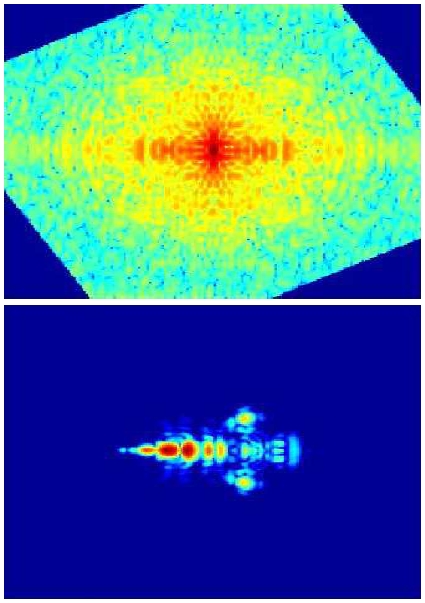

As an example, for two ISAR images of an F-117 aircraft with a relative rotation angle of 30°, a clear peak appears at 30° in the correlation profile obtained using Eq. (5) (Fig. 3). Due to the symmetry of the 2D Fourier transform of a real-valued ISAR image, an identical peak also appears at -150° (i.e., 30° - 180°), which must also be considered during classification. Based on the estimated RRA, the test spectrum is rotated counterclockwise by either 30° or -150°. Image rotation is performed using simple linear interpolation, implemented via MATLAB’s imrotate() function (Fig. 4).
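The 180° ambiguity follows from the point symmetry of the magnitude spectrum of a real-valued image, which the short check below illustrates. The helper `rra_candidates` is a hypothetical name, and an odd image size is used only so that the array center coincides exactly with zero frequency.

```python
import numpy as np

def rra_candidates(angle_deg):
    """Return the estimated angle and its 180-degree counterpart in [-180, 180)."""
    def wrap(a):
        return (a + 180.0) % 360.0 - 180.0
    return wrap(angle_deg), wrap(angle_deg - 180.0)

rng = np.random.default_rng(0)
image = rng.random((9, 9))                        # any real-valued image
mag = np.abs(np.fft.fftshift(np.fft.fft2(image)))
# |F(u, v)| = |F(-u, -v)|: flipping both axes leaves the magnitude unchanged.
point_symmetric = np.allclose(mag, mag[::-1, ::-1])
```

Since the polar-mapped magnitude therefore repeats every 180°, both candidate angles have to be tried when rotating the test spectrum.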

The rotated images obtained via inverse 2D Fourier transform are then coarsely aligned using the Center of Mass (COM), defined as follows:

| (6) |

where Mr and Nrr are the number of range and cross-range bins, respectively. All images are translated such that their COMs coincide. The resulting Mr × Nrr images are then compressed into Mr × d representations using 2D PCA through the following matrix multiplication:

| (7) |
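The coarse COM alignment can be sketched as below. The function names are hypothetical, and moving the COM to the central pixel by an integer circular shift is an assumption; the text only requires that the COMs of all images coincide.

```python
import numpy as np

def center_of_mass(image):
    """Intensity-weighted mean (row, column) position of a non-negative image."""
    image = np.asarray(image, dtype=float)
    total = image.sum()
    rows = np.arange(image.shape[0])
    cols = np.arange(image.shape[1])
    return (image.sum(axis=1) @ rows) / total, (image.sum(axis=0) @ cols) / total

def align_to_center(image):
    """Circularly shift the image so that its COM lands on the central pixel."""
    r_c, c_c = center_of_mass(image)
    shift = (image.shape[0] // 2 - int(round(r_c)),
             image.shape[1] // 2 - int(round(c_c)))
    return np.roll(image, shift, axis=(0, 1))
```

With the projection axes stacked as the columns of a matrix `axes` of shape (Nrr, d), the compression then reduces to `align_to_center(image) @ axes`, giving an Mr × d feature matrix.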

However, since high-noise pixels can slightly perturb COM, fine alignment is performed by minimizing the Euclidean norm of the difference between the training and test images. By circularly shifting the test image by sr and sc in the range and cross-range directions, respectively, the optimal shifts sro and sco for fine alignment are determined as follows:

| (8) |

with

| (9) |

where Yte is the compressed test image, shift(Yte,(sc,sr)) is the test image obtained by compressing In after a circular shift by (sc,sr), and Ytr is a compressed training image.
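The fine alignment amounts to an exhaustive search over small circular shifts, as in the sketch below. The function name `fine_align` and the search range `max_shift` are assumptions; the paper does not state how far the shifts are searched.

```python
import numpy as np

def fine_align(image_test, y_train, axes, max_shift=3):
    """Find the circular shift (s_r, s_c) of the test image that minimizes the
    Euclidean norm between the compressed training and test images.

    axes: (q x d) matrix of 2D PCA projection axes.
    Returns (s_r, s_c, cost) for the best shift.
    """
    best = (0, 0, np.inf)
    for s_r in range(-max_shift, max_shift + 1):
        for s_c in range(-max_shift, max_shift + 1):
            shifted = np.roll(image_test, (s_r, s_c), axis=(0, 1))
            cost = np.linalg.norm(y_train - shifted @ axes)   # Frobenius norm
            if cost < best[2]:
                best = (s_r, s_c, cost)
    return best
```

The test image is shifted before compression, so each candidate shift costs one matrix product of size Mr × Nrr by Nrr × d.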

Finally, classification is performed using a neural network classifier consisting of two hidden layers. The first and second hidden layers each contain 100 nodes, and the output layer has 6 nodes, corresponding to the number of target classes. The network uses one-hot encoding, with the output node corresponding to the correct class set to ‘1’ and all others set to ‘0’.
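For illustration, the forward pass of such a classifier can be written in a few lines of NumPy. Only the layer sizes (100, 100, 6) and the one-hot targets come from the text; the ReLU activations, softmax output, weight initialization, and the flattening of the Mr × d feature matrix into a vector are assumptions of this sketch, and training is not shown.

```python
import numpy as np

def init_network(n_inputs, hidden=(100, 100), n_classes=6, seed=0):
    """Random weights and zero biases for input -> 100 -> 100 -> 6."""
    rng = np.random.default_rng(seed)
    sizes = (n_inputs, *hidden, n_classes)
    return [(rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def forward(params, features):
    """Class probabilities for a batch of flattened feature matrices."""
    x = np.asarray(features, dtype=float)
    for w, b in params[:-1]:
        x = np.maximum(x @ w + b, 0.0)               # ReLU (assumed)
    w, b = params[-1]
    logits = x @ w + b
    logits -= logits.max(axis=1, keepdims=True)      # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum(axis=1, keepdims=True)      # softmax (assumed)

def one_hot(labels, n_classes=6):
    """One-hot targets: 1 at the correct class, 0 elsewhere."""
    return np.eye(n_classes)[np.asarray(labels)]
```

With Mr = 200 and d = 3, each input vector would have 600 elements, for example `forward(init_network(600), batch)` with `batch` of shape (N, 600).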

Ⅲ. Classification result

3.1 Classification condition

Using a 4-GHz bandwidth (8.3 - 12.3 GHz) in increments of 10 MHz, the measured RCS data of six aircraft models (F-4, F-14, F-16, F-22, F-117, and MiG-29) were processed to generate ISAR images over aspect angles of 0° to 180° in increments of 1°. To obtain high cross-range resolution, an angular span of ±15° about each aspect angle was used, and polar reformatting was applied [5] to yield ISAR images with Mr = Nrr = 200. The ISAR images were then upsampled to M = N = 500 by zero-padding.

For PM, the proposed method used Nr = 10 and Nθ = 360 to reduce the size of the training database, while the existing method in [5] used Nr = 50 and Nθ = 50, as these values were shown to yield near-100% recognition accuracy in [5]. To determine Rmin and Rmax, a rectangular window centered at the origin of the 2D FT spectrum was progressively widened. Rmin was set to the nearest multiple of 10 corresponding to the window width that accumulated 5% of the total spectral energy, while Rmax was set to the nearest multiple of 10 corresponding to the width containing 50% of the total energy.
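The energy-based choice of Rmin and Rmax can be sketched as follows. The function name `radius_for_energy` is hypothetical, and two points are assumptions: the window is taken to be a centered square whose half-width serves as the radius, and "energy" is taken as the squared spectral magnitude.

```python
import numpy as np

def radius_for_energy(spectrum_mag, fraction, step=10):
    """Half-width, rounded to a multiple of `step`, of the smallest centered
    square window holding at least `fraction` of the total spectral energy."""
    energy = np.asarray(spectrum_mag, dtype=float) ** 2
    rows, cols = energy.shape
    r_c, c_c = rows // 2, cols // 2
    total = energy.sum()
    for half in range(0, min(r_c, c_c) + 1):
        window = energy[r_c - half:r_c + half + 1, c_c - half:c_c + half + 1]
        if window.sum() >= fraction * total:
            return step * int(round(half / step))
    return step * (min(r_c, c_c) // step)            # fallback: largest window

# Toy example with a flat spectrum; real spectra concentrate energy near DC.
flat = np.ones((101, 101))
r_min = radius_for_energy(flat, 0.05)
r_max = radius_for_energy(flat, 0.50)
```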

For classification, a total of 1,086 ISAR images were divided into training and test sets. The training set was constructed by uniformly sampling aspect angles at 5° intervals from 0°, resulting in 37 images per target and a total of 37 × 6 = 222 training images. The remaining 864 images were used for testing. To simulate noise, Additive White Gaussian Noise (AWGN) was added to the measured RCS data to achieve target SNR levels, which were varied from 0 to 30 dB in 5 dB increments. Classification was repeated 10 times at each SNR with independently generated AWGN, and the average recognition ratio was reported as the final result.
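The data split and the noise injection can be reproduced in outline as below. The function name `add_awgn` is hypothetical, and the SNR is assumed to be defined as the ratio of average signal power to average noise power over all complex samples; the paper does not spell out its definition.

```python
import numpy as np

def add_awgn(signal, snr_db, rng):
    """Add complex white Gaussian noise so that the average SNR equals snr_db."""
    signal = np.asarray(signal, dtype=complex)
    signal_power = np.mean(np.abs(signal) ** 2)
    noise_power = signal_power / 10.0 ** (snr_db / 10.0)
    sigma = np.sqrt(noise_power / 2.0)               # per real/imaginary component
    noise = sigma * (rng.standard_normal(signal.shape)
                     + 1j * rng.standard_normal(signal.shape))
    return signal + noise

# Training/test split over aspect angle, as described in the text.
aspects = np.arange(0, 181)                 # 0..180 degrees in 1-degree steps
train_aspects = aspects[::5]                # every 5 degrees starting at 0
n_targets = 6
n_train = n_targets * train_aspects.size                    # 6 x 37
n_test = n_targets * (aspects.size - train_aspects.size)    # 6 x 144
```

Passing a fresh `np.random.default_rng()` for each of the 10 repetitions gives independently generated noise.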

3.2 Classification result

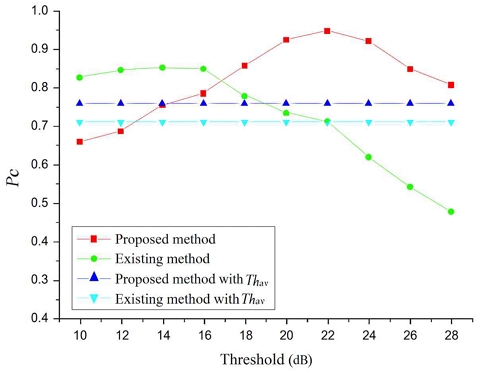

The first simulation examined the effect of the threshold value Th. Classification accuracy Pc was measured while varying Th from 10 to 28 dB in 2 dB increments at an SNR of 0 dB, with the number of projection axes set to d = 3. The results showed that Pc was highly sensitive to the choice of Th (in dB), and using the average pixel value Thav as the threshold yielded suboptimal performance (Fig. 5). The proposed method achieved the highest accuracy of 94.92% at Th = 22 dB, while the existing method [5] reached a maximum of 85.34% at Th = 16 dB. The lower performance of the existing method was attributed to normalization along the radial direction for each angular value, which amplified the influence of noise at high radial frequencies. In contrast, the proposed method was more robust to noise, as classification was performed directly on the ISAR images without frequency-domain normalization.

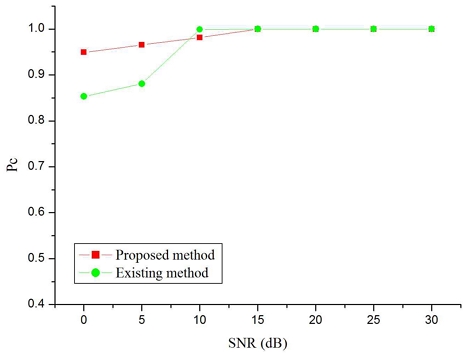

Using the optimal threshold values identified in the first simulation, a second simulation was conducted with SNR varying from 0 dB to 30 dB in 5 dB increments (Fig. 6), with 𝑑 = 3. At 0 dB and 5 dB SNR, the proposed method outperformed the existing method [5] by 9.58 and 8.47 percentage points, respectively. These results highlight the increased impact of noise on the existing method at low SNRs due to radial-direction normalization. For SNRs above 5 dB, both methods achieved nearly identical classification accuracies, approaching 100%.

The third simulation examined the effect of the number of projection axes in 2D PCA. At an SNR of 30 dB, classification was performed with 𝑑 varying from 2 to 10 in Eq. (7), in steps of 1. Except for 𝑑 = 2, which yielded Pc = 98.70%, all other cases achieved 100% classification accuracy (figure omitted for brevity). These results confirm that 2D PCA can effectively reduce the size of the training database. For example, using 𝑑 = 3, the storage requirement per training image becomes 10 × 360 (PM) + 3 × 200 (2D PCA) = 4200 elements, which corresponds to only 10.5% of the original 200 × 200 ISAR image size.
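The storage figure can be verified with a few lines of arithmetic; the variable names are chosen here for illustration only.

```python
n_r, n_theta = 10, 360        # polar-mapped spectrum size used by the proposed method
m_r, n_rr, d = 200, 200, 3    # ISAR image size and number of 2D PCA projection axes

stored = n_r * n_theta + d * m_r            # PM spectrum plus compressed image
original = m_r * n_rr                       # pixels in one original ISAR image
ratio_percent = 100.0 * stored / original   # share of the original size, in percent
```

This gives 4200 stored elements against 40000 original pixels, i.e. 10.5%.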

The final simulation was conducted to compare the sensitivity of the two methods to autofocus instability. To simulate imperfect autofocus, a two-dimensional finite impulse response (2D FIR) filter was applied to each test ISAR image, as described in [10]:

| $I_b(m,n) = \sum_{i=-L}^{L}\sum_{j=-L}^{L} W_{ij}\, I_n(m-i,\, n-j)$ | (13) |

where In is the normalized image, Ib is the image blurred by the FIR filter, and Wij is the filter weight given by Wij = 1/L² for i = -L, ..., L and j = -L, ..., L.
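A direct NumPy sketch of this uniform FIR blur is shown below. The function name `blur` is hypothetical, and treating pixels outside the image as zero is an assumption; the boundary handling is not stated in the text.

```python
import numpy as np

def blur(image, half_width):
    """Apply a (2L+1) x (2L+1) uniform FIR filter with weights 1/L^2.

    Pixels outside the image are treated as zero (assumed boundary handling).
    """
    image = np.asarray(image, dtype=float)
    L = half_width
    padded = np.pad(image, L)                 # zero border of width L on every side
    out = np.zeros_like(image)
    rows, cols = image.shape
    for i in range(2 * L + 1):                # accumulate all shifted copies
        for j in range(2 * L + 1):
            out += padded[i:i + rows, j:j + cols]
    return out / L ** 2
```

For L = 7, each output pixel averages a 15 × 15 neighborhood, which is consistent with the strong smearing of point scatterers reported for that setting.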

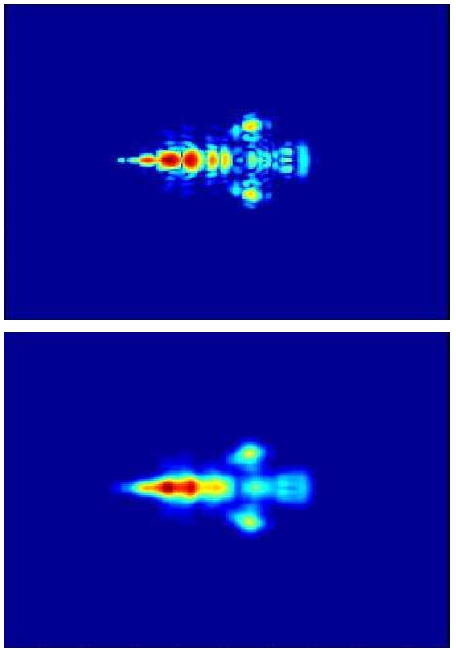

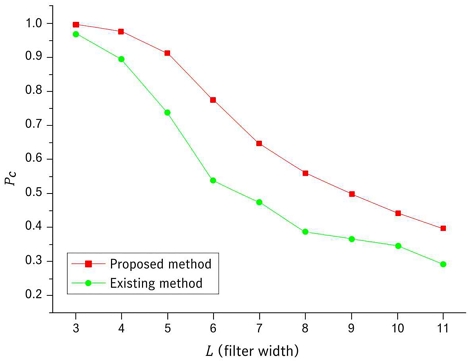

The ISAR image of the F-117 becomes significantly blurred when a 2D FIR filter with 𝐿 = 7 is applied (Fig. 7), obscuring key scattering features. As 𝐿 increases from 3 to 11, the classification accuracy Pc of both methods decreases due to increased blurring (Fig. 8). The existing method [5] is more susceptible to image degradation, as normalization along the radial direction amplifies the noise effect in each angular slice. In contrast, the proposed method shows greater robustness to defocus, since classification is performed directly on the ISAR images without frequency-domain normalization.

The classification results along with the corresponding experimental parameters are summarized in Table 1. It is noteworthy that, with a properly selected Th, the proposed method consistently achieves high classification performance even with a low-dimensional feature set under low SNR conditions.

IV. Conclusion

In this paper, we proposed an efficient method for classifying ISAR images that is invariant to translation and rotation. Simulation results using ISAR images obtained from data measured in a compact range showed that the proposed method is less sensitive to noise than the existing approach, reaching Pc = 100% for SNRs greater than 15 dB when 𝑑 = 3 and Th = 22 dB. In addition, significant data reduction was achieved through the use of 2D PCA; even with 𝑑 = 2, a classification accuracy of 98.70% was achieved. Furthermore, the proposed method demonstrated greater robustness to image blurring, as classification is performed directly on ISAR images.

However, performance evaluation of the proposed method uses RCS data measured at a compact range. In real situations, ISAR images can be seriously contaminated by jet engine modulation, which degrades Pc. In addition, ISAR images can be distorted due to the maneuverability of a target. Therefore, the verification presented here is limited in this sense. As future work, we will test our algorithm using ISAR images from real radar data.

Acknowledgments

This work was supported by a Research Grant of Pukyong National University (2025).

References

- C. C. Chen and H. C. Andrews, "Target-motion-induced radar imaging", IEEE Trans. Aerosp. Electron. Syst., Vol. 16, No. 1, pp. 2-14, Jan. 1980. [https://doi.org/10.1109/TAES.1980.308873]

- S. Musman, D. Kerr, and C. Bachmann, "Automatic recognition of ISAR ship images", IEEE Trans. Aerosp. Electron. Syst., Vol. 32, No. 4, pp. 1392-1404, Oct. 1996. [https://doi.org/10.1109/7.543860]

- K. Stasiak, et al., "Real-time high resolution multichannel ISAR imaging system", Int. Conf. Rad. Sys., Edinburgh, UK, pp. 389-394, 2022. [https://doi.org/10.1049/icp.2022.2349]

- R. O. Duda, P. E. Hart, and D. G. Stork, "Pattern Classification", 2nd ed. New York: Wiley, 2001.

- M. Kim, I. O. Choi, J. H. Jung, K. T. Kim, and S. H. Park, "Efficient ISAR Cross-Range Scaling Using Kalman Filter", Journal of KIIT, Vol. 16, No. 3, pp. 67-73, Mar. 2018. [https://doi.org/10.14801/jkiit.2018.16.3.67]

- S. H. Park, J. H. Jung, S. H. Kim, and K. T. Kim, "Efficient classification of ISAR images using 2D Fourier transform and polar mapping", IEEE Trans. Aerosp. Electron. Syst., Vol. 51, No. 3, pp. 1726-1736, Jul. 2015. [https://doi.org/10.1109/TAES.2015.140184]

- J. Yang, D. Zhang, A. F. Frangi, and J. Yang, "Two-dimensional PCA: a new approach to appearance-based face representation and recognition", IEEE Trans. Pattern Anal. Mach. Intell., Vol. 26, No. 1, pp. 131-137, Jan. 2004. [https://doi.org/10.1109/TPAMI.2004.1261097]

- M. Turk and A. Pentland, "Eigenfaces for recognition", J. Cognitive Neuroscience, Vol. 3, No. 1, pp. 71-86, Jan. 1991. [https://doi.org/10.1162/jocn.1991.3.1.71]

- M. A. Grudin, "On internal representations in face recognition systems", Pattern Recognition, Vol. 33, No. 7, pp. 1161-1177, Jul. 2000. [https://doi.org/10.1016/S0031-3203(99)00104-1]

- K.-T. Kim, D.-K. Seo, and H.-T. Kim, "Efficient radar target recognition using the MUSIC algorithm and invariant features", IEEE Trans. Antennas Propag., Vol. 50, No. 3, pp. 325-337, Mar. 2002. [https://doi.org/10.1109/8.999623]

2012. 2 : BS degree, Dept. of Electronic Engineering, Pukyong National University

2014. 2 : MS degree, Dept. of Electronic Engineering, Pukyong National University

2020. 2 : PhD degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2019. 12 ~ 2021. 2 : Senior Researcher, Agency for Defense Development

2021. 3 ~ 2024. 2 : Assistant Professor, Division of Electronics and Electrical Information Communication Engineering, National Korea Maritime & Ocean University

2024. 3 ~ Present : Assistant Professor, Dept. of Smart Mobility Engineering, Pukyong National University

2025. 9 ~ Present : Vice Dean, College of Information Technology and Convergence, Pukyong National University

Research interests : Radar target detection, dualband radar resource management, micro-Doppler analysis

2017. 2 : BS degree, Dept. of Electronic Engineering, Pukyong National University

2019. 2 : MS degree, Dept. of Electronic Engineering, Pukyong National University

2025. 2 : PhD degree, Dept. of Electronic Engineering, Pukyong National University

2019. 3 ~ 2020. 11 : Researcher, Security Fusion Technology Center, Pohang University of Science and Technology

2021. 2 ~ 2022. 8 : Researcher, Electromagnetic Wave Research Center, Kookmin University

2022. 10 ~ Present : Researcher, Security Strategy Research Center, Konkuk University Glocal Campus

Research interests : Radar target imaging and recognition, radar signal processing, radar cross section prediction

1991. 2 : BS degree, Korea Air Force Academy

1998. 2 : MS degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2007. 2 : PhD degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2008. 12 ~ 2012. 10 : General Manager of R&D Planning, Defense Acquisition Program Administration

2012. 11 ~ 2015. 10 : Director, Defense R&D Strategy Center, Pohang University of Science and Technology

2015. 11 ~ 2018. 10 : Director, Unmanned Safety Robot Center, Korea Advanced Institute of Science and Technology

2019. 3 ~ 2020. 11 : Director, Security Fusion Technology Center, Pohang University of Science and Technology

2021. 2 ~ 2022. 8 : Director, Electromagnetic Wave Research Center, Kookmin University

2022. 10 ~ Present : Director, Security Strategy Research Center, Konkuk University Glocal Campus

Research interests : Radar target recognition, radar signal processing, and electromagnetic analysis on the wind farm by various military radars

2015. 2 : BS degree, Dept. of Electronic Engineering, Pukyong National University

2017. 2 : MS degree, Dept. of Electronic Engineering, Pukyong National University

2022. 2 : PhD degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2023. 3 ~ Present : Senior Research Scientist, Dept. of Maritime ICT and Mobility Research, Korea Institute of Ocean Science and Technology

Research interests : Radar target detection, micro-Doppler analysis, sonar detection

2004. 2 : BS degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2017. 2 : MS degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2010. 2 : PhD degree, Dept. of Electrical Engineering, Pohang University of Science and Technology

2010. 9 ~ 2012. 2 : Full-Time Instructor, Dept. of Electronic Engineering, Pukyong National University

2012. 3 ~ 2014. 2 : Assistant Professor, Dept. of Electronic Engineering, Pukyong National University

2014. 3 ~ 2018. 2 : Associate Professor, Dept. of Electronic Engineering, Pukyong National University

2018. 3 ~ Present : Professor, Dept. of Electronic Engineering, Pukyong National University

Research interests : Radar target detection, micro-Doppler analysis, sonar detection